When you learn about SQL Server, you will often hear that a commit transaction is a synchronous operation and that you can trust it. In this blog post, I will provide some details about what we mean by synchronous behavior. The reason is that sometimes, when I talk about the new delayed durability feature provided by SQL Server 2014, there are some confusions. If you want more details on this new feature, please read the blog post of my colleague Stéphane Haby here. A quick shortcut is often the following: writing to the transaction log is synchronous, while writing with the new delayed durability feature is asynchronous.

First of all, you probably know that the buffer manager guarantees that the transaction log is written before the changes to the database are written. This is the famous protocol called Write-Ahead logging (aka WAL). Log records are not written directly to disk but first into the buffer cache and then flushed to the disk in a purely asynchronous manner. However, at the commit time the related thread must wait for the writes to complete to the point of the commit log record in the transaction log. This is the synchronous part of commit operation in order to meet the WAL protocol.

On the other hand, the new delayed durability feature allows the commit operation to be asynchronous (like writing to the transaction) but the big difference is that the related thread doesn’t have to wait until the commit log record is written in the transaction log. This new feature introduces some performance improvements, but as a caveat, there is the loss of data.

We can prove that both commit operations write asynchronously by using either the process monitor tool or by using a debugger and trying to catch the part of the code responsible for writing into the transaction log file.

I will use the following T-SQL script for this demonstration:

–> Commit transaction (without delayed durability option)

–> Commit transaction (with delayed durability enabled)

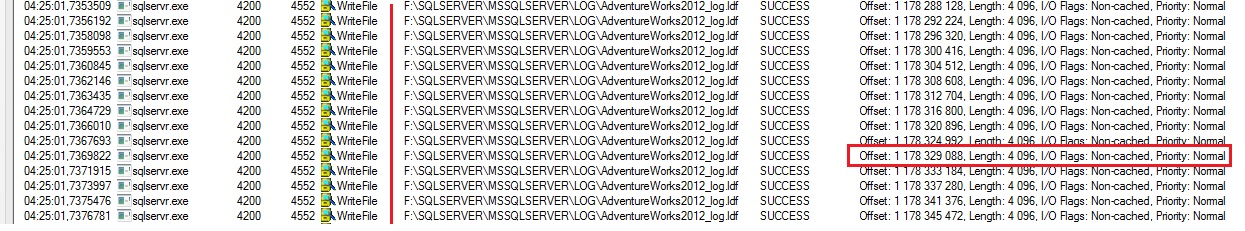

Below, you will find an interesting picture of the process monitor trace output that shows the SQL Server file system activity that writes to the transaction log file in both cases.

–> Commit transaction (without delayed durability option)

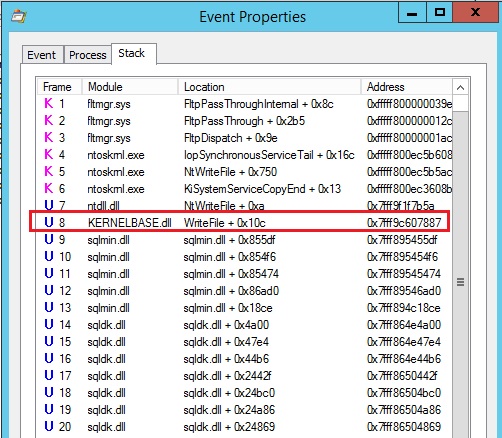

You will notice that SQL Server uses the WriteFile() function to write to the transaction log for each commit operation (4096 bytes each). I will only show you a sample of the output, but you can imagine the final number of records you can have here. If we take a look at the process monitor stack you will notice that SQL Server uses the WriteFile() Windows function located in the Kernel32.lib library to write to the transaction log with an overlapped structure (in others words asynchronous I/O).

This test confirms what Bob Dorr explains in the Microsoft article about SQL Server I/Os and transaction log I/O.

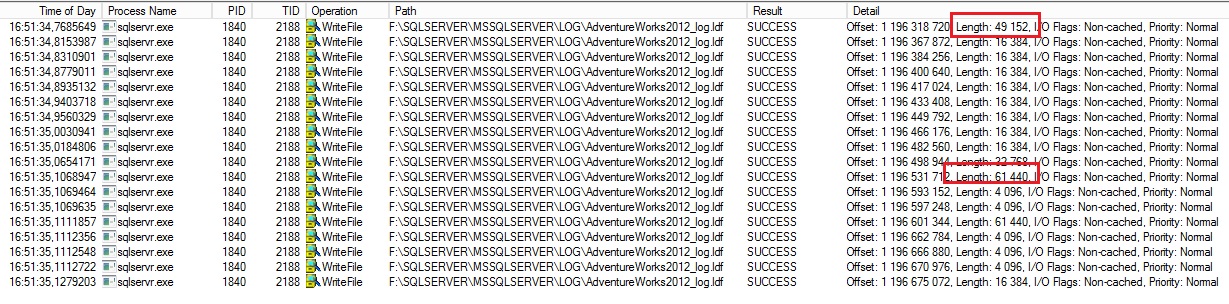

–> Commit transaction (with delayed durability enabled)

In this case, the same function is used by SQL Server with a big difference here: SQL Server will group some IO into chunks (in my case 16K, 48K, and 60K) before writing to disk. Cleary, there is less activity here (in my case 18 lines against approximatively 1000 lines for the first test).

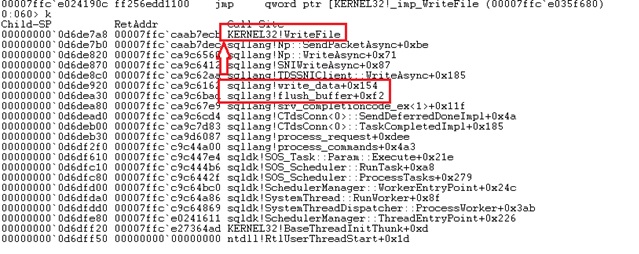

We can also attach a debugger (for instance WinDbg) to the SQL Server process and set a breakpoint in the Kernel32!writefile() function for the calling thread in order to have more details about the execution stack. Note that the process monitor stack showed the module KERNELBASE.dll for the WriteFile() function but as mentioned by this Microsoft article kernelbase.dll gets functionality from kernel32.dll and advapi32.dll.

Both commit operations show the same stack except of course the number of executions.

To summarize, I wanted to show you that both commit operations (with and without delayed duration) are using asynchronous IO to write to the transaction log file. The big difference is that with the delayed durability option, SQL Server improves the log IO writes performance by deferring and grouping the IO into 60K chunks before writing to the disk. I hope this will help you understand more about SQL Server commit operations.

By David Barbarin

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/12/microsoft-square.png)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/OLS_web-min-scaled.jpg)