You might know that there are many JVMs available today, but do you know the differences between them? In this blog I will not list every JVM, as that would take too much time 🙂 Instead I will talk about Hotspot and JRockit, because they are widely used and because they are expected to eventually merge in Java 8! That’s what they say, at least…

What’s a JVM?

This is just a reminder, so you might want to jump to the next chapter! JVM stands for Java Virtual Machine. When coding in Java, you write in a high-level language that the machine can’t understand directly. With many other languages, you compile your code straight into machine-readable binaries.

But when you compile a Java program, the compiler generates an intermediate code called byte-code. It looks like assembly language when disassembled with javap. The JVM then translates this byte-code into machine code on the fly; how exactly it does so depends on the JVM implementation you use.
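To make the byte-code idea concrete, here is a tiny class you can compile and disassemble yourself. The class name `Adder` is just an assumption for illustration, and the instruction names in the comment are what I would expect for this method; the full javap listing will contain more than that.

```java
// Hypothetical example class, only meant to be disassembled with javap.
class Adder {
    // For a static method with two int parameters, the body typically
    // compiles to: iload_0, iload_1, iadd, ireturn.
    static int add(int a, int b) {
        return a + b;
    }

    public static void main(String[] args) {
        System.out.println(add(2, 3)); // prints 5
    }
}
```

Compile it with `javac Adder.java`, then run `javap -c Adder` to see the byte-code of each method.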

In addition, it manages the runtime of your application, the memory, threads, etc.

Why use different JVMs?

We could also ask: why are there different cars? Simply because needs differ. Each JVM implementation can be built for a specific need. For instance, to give the big picture, Hotspot is optimised for client applications whereas JRockit is better suited to servers with long-running processes. Note that JRockit is the default JVM shipped with Oracle WebLogic Server, and Hotspot is the default one in the Java JDK/JRE.
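If you are not sure which JVM a given environment actually runs, the standard system properties will tell you. Here is a minimal sketch; the class name `WhichJvm` is my own, and the exact strings returned depend on the vendor and version installed.

```java
// Hypothetical helper that reports which JVM implementation is running.
class WhichJvm {
    // java.vm.name, java.vm.vendor and java.vm.version are standard
    // system properties available on every JVM.
    static String describe() {
        return System.getProperty("java.vm.name")
                + " (" + System.getProperty("java.vm.vendor")
                + ", " + System.getProperty("java.vm.version") + ")";
    }

    public static void main(String[] args) {
        System.out.println("Running on: " + describe());
    }
}
```

Running the same class under Hotspot and under JRockit should print a different VM name in each case.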

Hotspot vs JRockit

As mentioned above, the Hotspot JVM is better suited for UI desktop applications whereas JRockit is built for best performance and fast execution. Let’s look at the differences in detail.

Architecture

I already said that JVMs interpret the generated byte code. That’s how Hotspot starts out, but not JRockit. Hotspot begins by interpreting: each time a method is called, its byte code is translated and executed on the fly. Methods that turn out to be called a lot of times (the “hot spots” that give the JVM its name) are then compiled to machine code by a JIT (Just In Time) compiler, and the compiled version is reused.

JRockit is a bit different: it has no interpreter and relies on JIT compilation from the start. The first time a method is called, JRockit compiles its byte code and keeps the resulting machine code in memory in case the method is called again, in order to speed up later executions.

But note that with this mechanism JRockit uses more memory, and the JVM takes longer to start up because every method has to be compiled before its first run.
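A rough way to see compiled code being reused is to time the same method over several rounds. This is only a sketch under my own assumptions (the class name, loop sizes and number of rounds are arbitrary), not a proper benchmark; timings vary a lot between machines and JVMs, and a dedicated harness such as JMH is the right tool for real measurements.

```java
// Hypothetical warm-up demo: times the same work over several rounds.
class WarmupDemo {
    // Plain arithmetic loop, a typical candidate for JIT compilation.
    static long sumOfSquares(int n) {
        long total = 0;
        for (int i = 0; i < n; i++) {
            total += (long) i * i;
        }
        return total;
    }

    public static void main(String[] args) {
        for (int round = 1; round <= 5; round++) {
            long start = System.nanoTime();
            long checksum = 0;
            for (int call = 0; call < 20000; call++) {
                checksum += sumOfSquares(1000);
            }
            long micros = (System.nanoTime() - start) / 1000;
            // The checksum is printed so the work cannot be optimized away.
            System.out.println("round " + round + ": " + micros
                    + " us (checksum " + checksum + ")");
        }
    }
}
```

On most JVMs the first round tends to be the slowest, since that is when compilation happens, but treat the numbers as an indication only.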

Optimizations

JRockit puts a lot of effort into runtime optimizations (Hotspot optimizes at runtime too, but in its own way). JRockit identifies frequently executed code, called “hot spots”, in order to speed up its execution and lower resource consumption. It queues the most frequently called methods for optimization so that they run faster, and it also condenses some of the methods it has identified. But note that this optimization process is really complex and is often the root cause of JVM crashes. It can be disabled, so use it carefully.

JRockit can adjust JVM parameters to fit the application’s needs. For example, it can update the TLA (Thread Local Area) size, which is 2k by default, when an OOM (Out Of Memory) occurs. By adjusting such parameters it can speed up the JVM and adapt its behavior to the application. JRockit monitors itself to find out what it can optimize.

Memory architecture

JVMs mostly work with generational memory structures. That means that the life cycle of an object defines its position in memory.

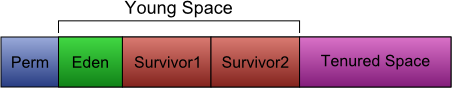

The Hotspot JVM is split into 4 spaces:

- Perm: the permanent space, where classes’ binaries are loaded

- Eden: where new objects are instantiated (in young space)

- Survivor space: where objects are sent when they survive a minor collection (in young space)

- Tenured space: where objects are promoted when old enough

The JRockit JVM is generally split into 2 spaces:

- Nursery: almost the same as Hotspot’s young space

- Old space: almost the same as Hotspot’s tenured space

Note that the JRockit heap can also be a single space, without generations.
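You can list the memory spaces of whatever JVM you are running through the standard `java.lang.management` API. The sketch below is an assumption-laden illustration (the class and method names are mine); the pool names you get depend on the JVM, its version and the selected garbage collector. For instance, recent Hotspot releases report a Metaspace pool where older ones reported Perm.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryUsage;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper that lists the memory pools of the running JVM.
class MemoryPools {
    static List<String> describePools() {
        List<String> lines = new ArrayList<>();
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            // getUsage() may return null if the pool is no longer valid.
            MemoryUsage usage = pool.getUsage();
            long usedKb = (usage == null) ? -1 : usage.getUsed() / 1024;
            lines.add(pool.getType() + " | " + pool.getName()
                    + " | used " + usedKb + " KB");
        }
        return lines;
    }

    public static void main(String[] args) {
        for (String line : describePools()) {
            System.out.println(line);
        }
    }
}
```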

Garbage collector

For the Hotspot VM you can choose between different garbage collector types, depending on the kind of application you are running, whereas JRockit offers a single type with several modes.

I will not cover the specifics of the Hotspot garbage collectors in this article, since I will do that in a dedicated one. Instead, I will only talk about the JRockit garbage collector (GC) modes and tuning.

JRockit uses the Mark-and-Sweep model with either a Mostly Concurrent strategy (equivalent to Hotspot’s CMS) or a Parallel strategy. The Mostly Concurrent Mark-and-Sweep GC is well suited to application servers, as it improves responsiveness by lowering pause times and raising throughput.

- Mostly Concurrent Strategy: most GC phases run alongside the application, which means the application is never stopped for the whole collection

- Parallel Strategy: every processor is used to run the GC as fast as possible, but this is an STW (Stop The World) collection, which means the application is completely stopped for the whole GC

- Both strategies can be mixed

Additionally a GC mode can be selected for JRockit. These are the main options:

- Throughput (default): Optimizes the GC for maximum application throughput

- Pausetime: Optimizes the GC for short and even pause time

- Deterministic (only available for JRockit Real Time): Optimizes the GC for very short and deterministic pause time

You can choose the GC mode by specifying the -Xgc: argument.

- -Xgc:throughput

- -Xgc:pausetime

- -Xgc:deterministic

- -Xgc:gencon Two generations in Mostly Concurrent

- -Xgc:genpar Two generations in Parallel

- -Xgc:singlecon One generation in Mostly Concurrent

- -Xgc:singlepar One generation in Parallel
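To double-check which of these options a running JVM actually received, you can read its input arguments through the standard `RuntimeMXBean`. This is a minimal sketch; the class name `GcFlags` and the filtering on the `-Xgc:` and `-XpauseTarget` prefixes are my own choices for illustration.

```java
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper that picks the GC-related options out of JVM arguments.
class GcFlags {
    static List<String> gcFlags(List<String> jvmArgs) {
        List<String> found = new ArrayList<>();
        for (String arg : jvmArgs) {
            if (arg.startsWith("-Xgc:") || arg.startsWith("-XpauseTarget")) {
                found.add(arg);
            }
        }
        return found;
    }

    public static void main(String[] args) {
        // Arguments the current JVM was really started with.
        List<String> actual = ManagementFactory.getRuntimeMXBean().getInputArguments();
        System.out.println("GC flags of this JVM: " + gcFlags(actual));

        // The same filter applied to a made-up argument list.
        List<String> sample = Arrays.asList("-Xmx512m", "-Xgc:gencon", "-XpauseTarget:200");
        System.out.println(gcFlags(sample)); // prints [-Xgc:gencon, -XpauseTarget:200]
    }
}
```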

Additionally, you can specify a target pause time that the GC will try to follow with -XpauseTarget:200. Here is a tuning tip:

Is your application sensitive to long GC pauses (500ms or more)?

- Yes: use gencon or singlecon

- No: use genpar or singlepar

Does your application allocate a lot of temp objects?

- Yes: use gencon or genpar

- No: use singlecon or singlepar
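Combining the two questions gives exactly one option per case. The sketch below only encodes the tip above as a lookup; the class `GcAdvisor` and its method are hypothetical, not part of JRockit, and real tuning should be validated with measurements.

```java
// Hypothetical helper that encodes the two-question tuning tip.
class GcAdvisor {
    static String suggest(boolean pauseSensitive, boolean manyTempObjects) {
        // Many short-lived objects: use two generations, otherwise one.
        String generations = manyTempObjects ? "gen" : "single";
        // Sensitive to long pauses: Mostly Concurrent, otherwise Parallel.
        String strategy = pauseSensitive ? "con" : "par";
        return "-Xgc:" + generations + strategy;
    }

    public static void main(String[] args) {
        System.out.println(suggest(true, true));   // prints -Xgc:gencon
        System.out.println(suggest(true, false));  // prints -Xgc:singlecon
        System.out.println(suggest(false, true));  // prints -Xgc:genpar
        System.out.println(suggest(false, false)); // prints -Xgc:singlepar
    }
}
```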

A lot of tuning options are available, and they differ between Hotspot and JRockit. Have a look at the Oracle documentation for further information.

Performance monitoring

Concerning monitoring tools, Hotspot offers JConsole, which monitors memory, CPU and threads and helps identify memory leaks. But it remains rather crude.

JRockit provides JRMC (JRockit Mission Control), a suite of advanced monitoring and analysis tools. It can record JVM behavior so that you can analyze it afterwards. You can check JVM options and arguments, garbage collection details, the methods that use the most memory, memory leaks, etc. JRockit embeds a lot of features that simplify monitoring through external tools without disrupting JVM performance.
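The basic figures shown by a tool like JConsole are exposed by the JVM through standard MXBeans, so you can read them from code as well. Here is a minimal sketch using `java.lang.management`; the class and method names are my own, and it says nothing about how JRMC works internally.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryUsage;

// Hypothetical helper that takes a small snapshot of the running JVM.
class JvmSnapshot {
    // Heap currently in use, in bytes.
    static long heapUsedBytes() {
        MemoryUsage heap = ManagementFactory.getMemoryMXBean().getHeapMemoryUsage();
        return heap.getUsed();
    }

    // Number of live threads, daemon and non-daemon.
    static int liveThreads() {
        return ManagementFactory.getThreadMXBean().getThreadCount();
    }

    public static void main(String[] args) {
        System.out.println("heap used: " + heapUsedBytes() / (1024 * 1024) + " MB");
        System.out.println("live threads: " + liveThreads());
    }
}
```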

Which one should I use?

It definitely depends on the application you want to run. Here is a summary of when to use them:

Hotspot

- Desktop application

- UI (swing) based application

- Desktop daemon

- Fast starting JVM

JRockit

- Java application server

- High performance application

- Need for a full monitoring environment

In future releases, JRockit is planned to merge with Hotspot to produce a “best-of-breed JVM”, internally called HotRockit.
