In this post I’d like to share what we did for a customer who had the following requirements:

- Use Oracle GoldenGate

- Upgrade an Oracle 10.2.0.4 database running on Solaris 10 SPARC to Oracle 12.1.0.2 running on Exadata

- Keep the downtime as small as possible

- Minimize the impact on the production database as much as possible

I will not describe why GoldenGate was the product of choice nor will I describe how we verified that Golgengate actually can be used for what we planned to do. That (maybe) will be the topic of another post.

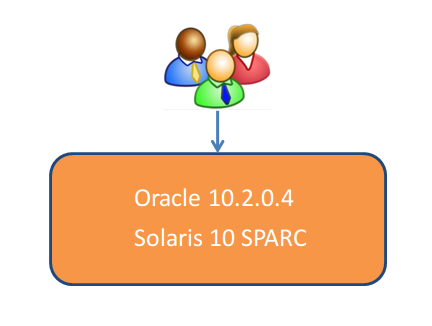

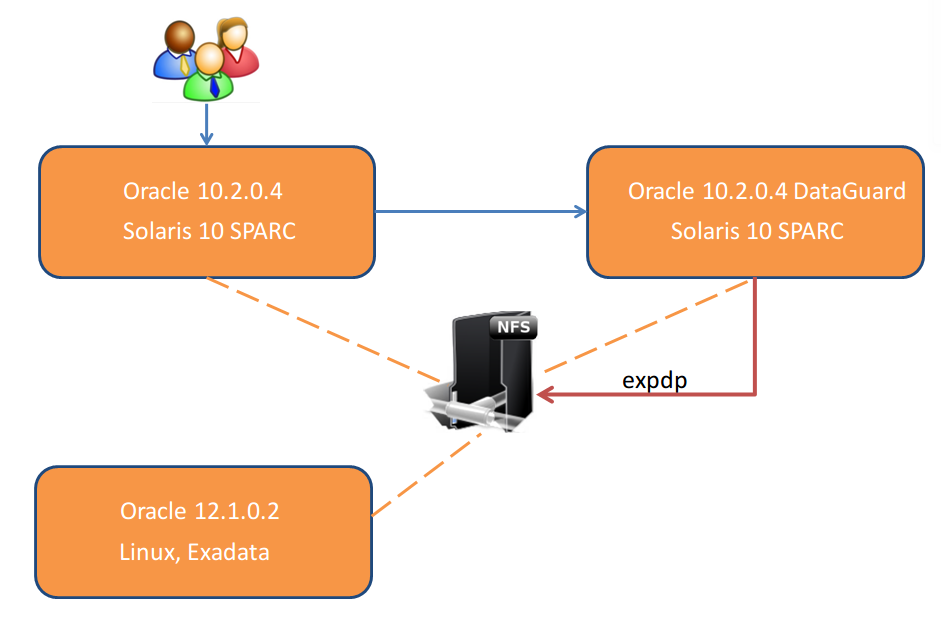

The staring point was (as always) that all the users are working on the production 10.2.0.4 database:

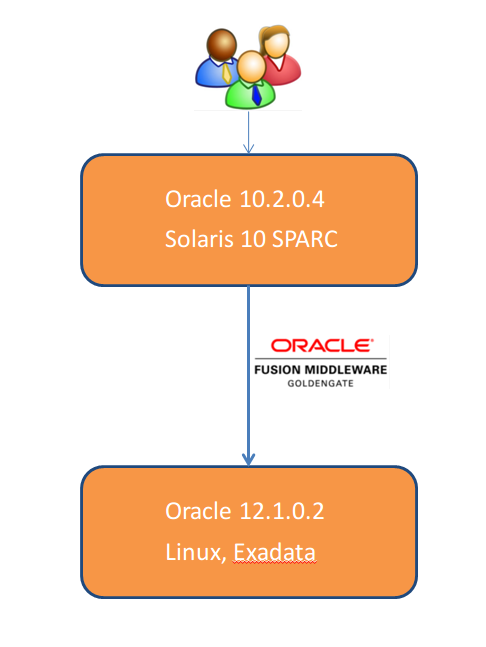

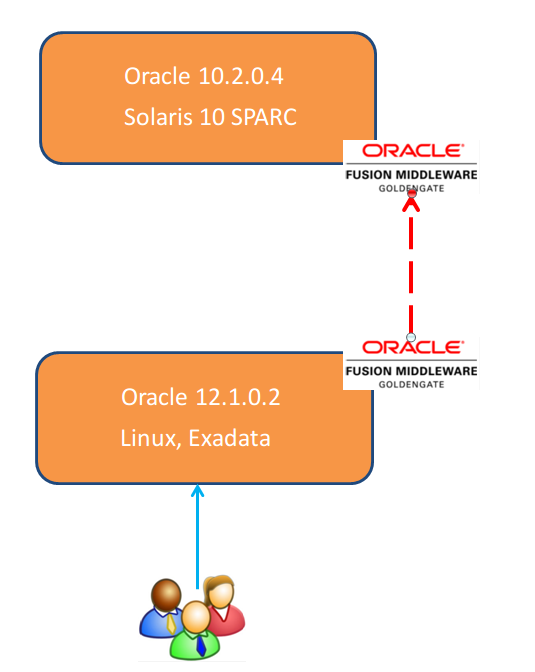

The simplified solution would be to build a GoldenGate stream between the source and the target …

… at the day of the go live switch the users …

… shutdown the GoldenGate stream and decommission the old database once everything is fine … ha!!!, easy, isn’t it?

Well, is was not soooo easy as we had two very important constraints:

- We were not allowed to cause any additional load on the 10.2.0.4 production database

- We were not allowed to install anything on the 10.2.0.4 production database

How can we do an initial load of the target database when we are not allowed to generate any additional load on the source? An expdp of a 4TB database for sure will cause load and we needed to do the export as fast as possible because:

- expdp must be done with a flashback_scn for starting the replicat at the target and because of this…

- we need to make sure that we have a high enough undo_retention and a big enough undo tablespace

Short answer if we had asked to do the export directly on production:

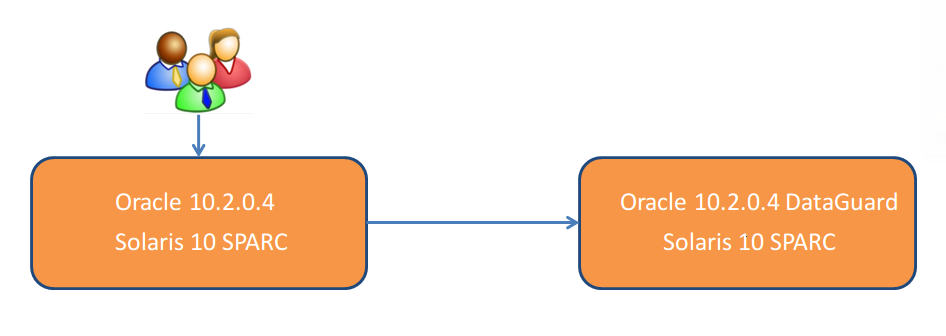

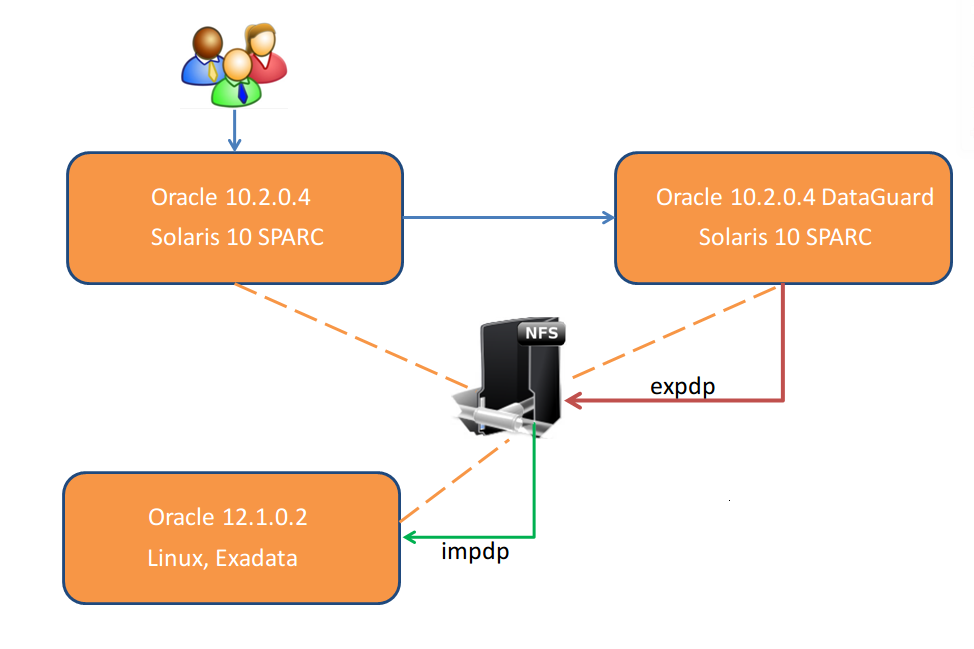

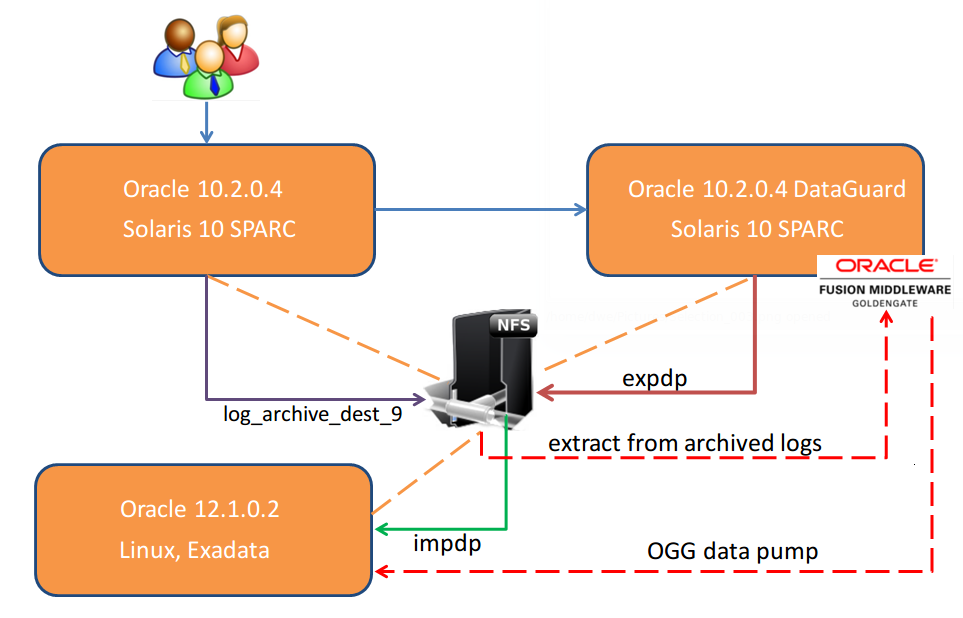

What we did to offload the export from production is to build a DataGuard on another server so the picture now looks like this:

Wait, can you do a expdp on a 10.2.0.4 standby database? No. So what we had to do is to create a guaranteed restore point, break the DataGuard, do the export as fast as possible and then re-synchronize the standby database. The re-synchronization was essential because we wanted to do the same thing over and over again if we’d need to fix issues and do a fresh initial load. Btw: the export was done to a NFS share which was mounted to the standby system, the production system and the target system:

From the same NFS we were able to initially load the target database:

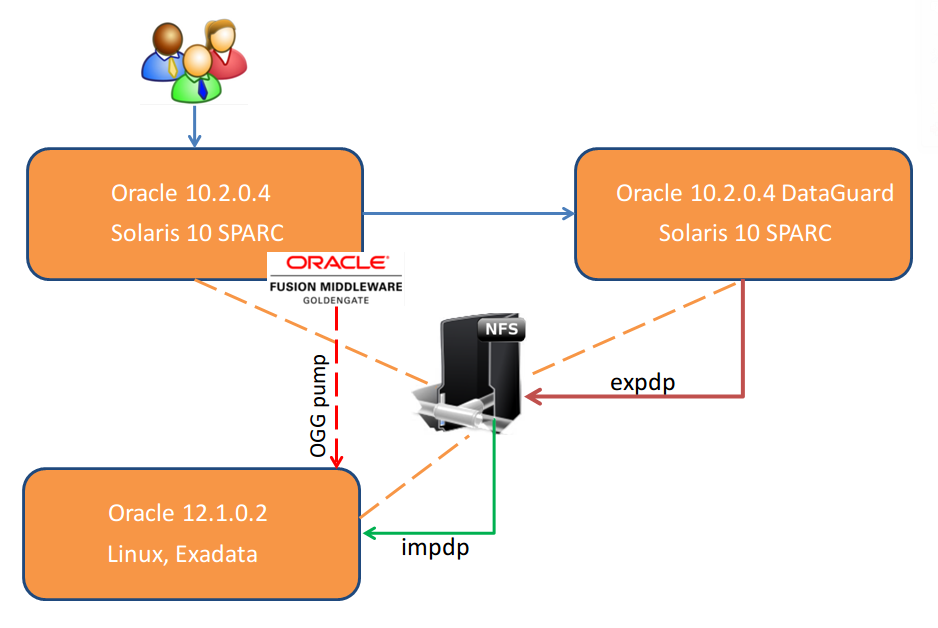

Wait, isn’t there something missing? Yes. Of course we started the GoldenGate extracts on the source before we started the export on the standby server:

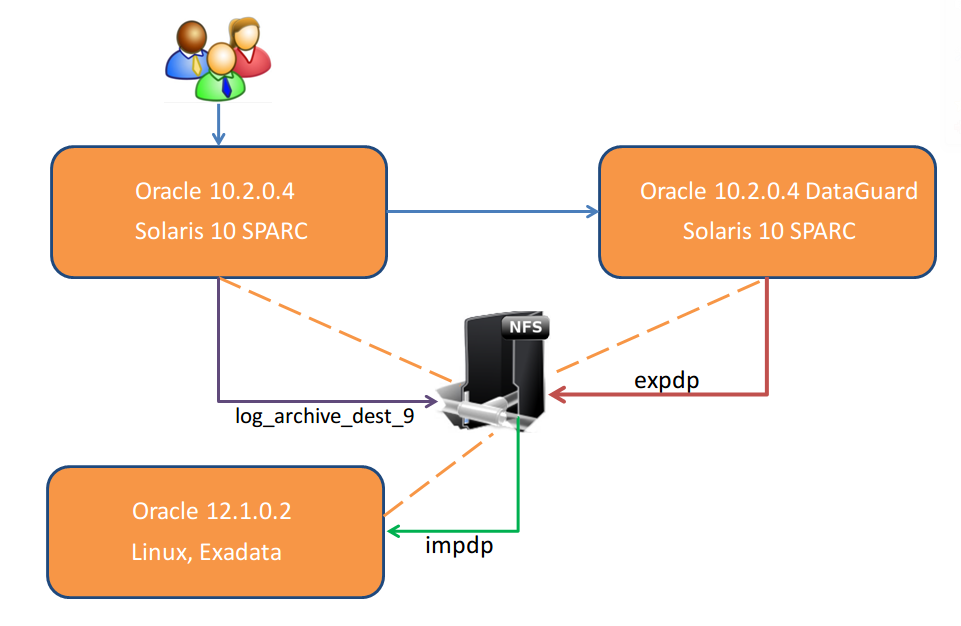

Didn’t I say we were not allowed to install anything on the source? So the picture above is not right. What we did instead is to let the source send the archived logs to another destination which is on the same NFS share:

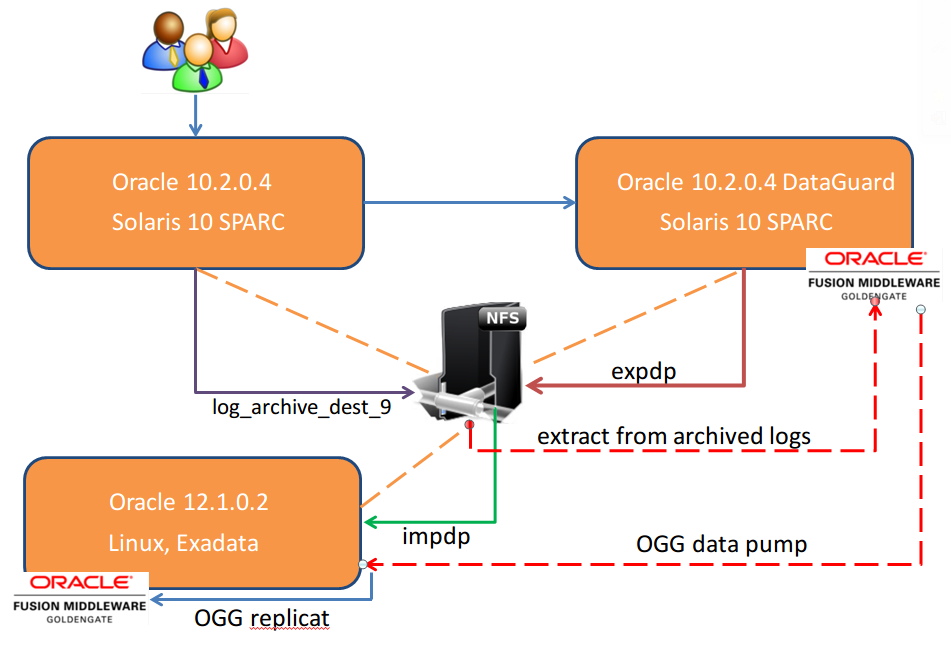

Having the archived redo logs available on the NFS we installed GoldenGate on the standby system having it read the archived logs from there and then pump the extracts to the target:

The last remaining bit was to setup GoldenGate on the target and let it replicate the OGG pumps which come from the standby system:

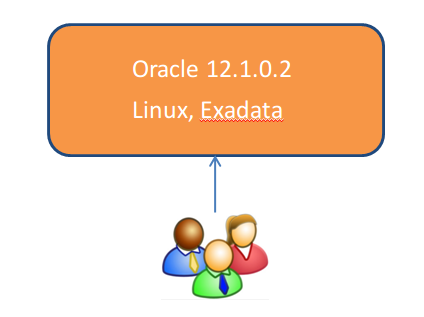

On the day of the go live the users/application switched and everybody is happy:

Really? How can we recover from that if for whatever reason the users/applications must be switched back? This is not an issue if nothing happens on the target database but usually for deciding on a go/no modifications do occur. Restoring the database was not an option as it would have been too time consuming so we did this:

When the application was shutdown on the old production database but before it was brought only on the new target database we switched the streams. From now on the new system was replicating to the old system and we had a safe way back.

I know many technical details are missing here but this was not the scope of this post. The scope was to outline a way to do a almost zero downtime migration using Oracle GoldenGate with minimal impact on the source database and the possibility to switch back to the old system if required. Hope this helps ….

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/DWE_web-min-scaled.jpg)