I never wrote about data platform solutions on Azure so far. The fact is in Switzerland we’re definitely late about the Cloud adoption and Azure data platform solutions. There are different reasons that are more or less valid but I don’t want to contribute to any debate here. In any case the last announcements in this field with Azure data centers in Switzerland could encourage customers to reconsider this topic in the near future. Don’t get me wrong here, it doesn’t mean that customers in Switzerland must move all their database infrastructures in the Cloud but this is just an opportunity for them to consider rearchitecting some pieces of their information system including databases that may lead to hybrid scenarios. It will be likely the first step to the cloud adoption for data platforms solutions. At dbi services we didn’t exclude Azure from our target but we just silently continued to keep an eye on the data platform stack awaiting the right opportunity to move up a gear.

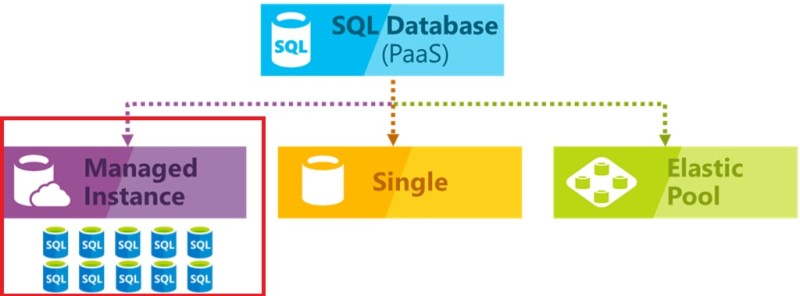

Why to begin with SQL managed instances (MI)? After all, this feature is still on preview and it already exists a lot of Azure solutions as Azure SQL Databases (singleton and with elastic pool as well) as well as SQL Server on Azure VMs.

The point is this new feature is interesting in many aspects. Firstly, it will address the gap that currently exists between IaaS infrastructures (SQL Server VMs) and fully-managed services (with Azure SQL DBs). The former still requires maintaining the operating system (and licenses) while the latter didn’t expose all the feature surface needed by various application scenarios.

At the same time, Microsoft introduced another purchasing model that based on VCore. I remembered a discussion with one of my customers sometimes ago about DTUs. He asked me what is exactly DTU and I pointed out the following sentence from the Microsoft documentation.

The amount of resources is calculated as a number of Database Transaction Units or DTUs and is a bundled measure of compute, storage, and IO resources

That is definitely a good way to simply resource management because it makes an abstraction of the physical resources but this is probably it weakness in some degrees. Indeed, how to translate what DBAs and infrastructure team usually manage from on-premises to the cloud? Obviously, Microsoft provided a calculator to help customers to address their questions before moving to the cloud but the fact is database administrators seem to not be comfortable to deal with DTUs . But now let’s talk about flexibility: in many databases scenarios we don’t want to increase / decrease resources in the same bundle but we want to get a better control of the resource configuration by dissociating compute (CPU / RAM) from the storage. From my experience, I had never seen one customer to scale compute and storage in the same manner regarding their workload. Indeed, some workloads require high-performance storage while others are more CPU-bound. This is where the new vCore-based model comes into play by and I believe it will get a better adoption from customers to smoothly move to the cloud. That’s at least my opinion!

So, let’s try to play with MI. As a reminder, currently it is in preview but that’s enough to get a picture of what you may expect in the future. In my demo, I will use intensively CLI tools with dedicated PowerShell cmdlets and mssql-cli as well. This is voluntary because the fact is more and more administration tasks are done in this way and Microsoft provided all the commands to achieve them.

[dab@DBI-LT-DAB:#]> Get-AzureRmResourceGroup -Name sql-mi-rg ResourceGroupName : sql-mi-rg Location : westeurope ProvisioningState : Succeeded Tags : ResourceId : /subscriptions/913528f5-f1f8-4d61-af86-30f2eb0839ba/resourceGroups/sql-mi-rg [dab@DBI-LT-DAB:#]> Get-AzureRmResource -ResourceGroupName sql-mi-rg | ft Name, ResourceType, Location -AutoSize Name ResourceType Location ---- ------------ -------- sql-mi-client_OsDisk_1_842d669310b04cbd8352962c4bda5889 Microsoft.Compute/disks westeurope sql-mi-client Microsoft.Compute/virtualMachines westeurope shutdown-computevm-sql-mi-client Microsoft.DevTestLab/schedules westeurope sql-mi-client453 Microsoft.Network/networkInterfaces westeurope sql-mi-client-nsg Microsoft.Network/networkSecurityGroups westeurope sqlmiclientnsg675 Microsoft.Network/networkSecurityGroups westeurope sql-mi-client-ip Microsoft.Network/publicIPAddresses westeurope sqlmiclientip853 Microsoft.Network/publicIPAddresses westeurope sql-mi-routetable Microsoft.Network/routeTables westeurope sql-mi-vnet Microsoft.Network/virtualNetworks westeurope sql-mi-dbi Microsoft.Sql/managedInstances westeurope sql-mi-dbi/ApplixEnterprise Microsoft.Sql/managedInstances/databases westeurope sql-mi-dbi/dbi_tools Microsoft.Sql/managedInstances/databases westeurope VirtualClustersql-mi-subnet Microsoft.Sql/virtualClusters westeurope sqlmirgdiag947 Microsoft.Storage/storageAccounts westeurope

My MI is composed of difference resources including:

- VirtualClustersql-mi-subnet – a logical container of managed instances?

- sql-mi-dbi as managed instance

- sql-mi-dbi/ApplixEnterprise and sql-mi-dbi/dbi_tools as managed databases.

- Network components including sql-mi-vnet, sql-mi-routetable

Here some more details of my MI:

[dab@DBI-LT-DAB:#]> Get-AzureRmSqlManagedInstance | ft ManagedInstanceName, Location, ResourceGroupName, LicenseType, VCores, StorageSizeInGB -AutoSize ManagedInstanceName Location ResourceGroupName LicenseType VCores StorageSizeInGB ------------------- -------- ----------------- ----------- ------ --------------- sql-mi-dbi westeurope sql-mi-rg LicenseIncluded 8 32

I picked up a GEN4 configuration based on General Purpose pricing that includes 8 VCores and 32GB of storage.

My managed databases are as follows:

[dab@DBI-LT-DAB:#]> Get-AzureRmSqlManagedDatabase -ManagedInstanceName sql-mi-dbi -ResourceGroupName sql-mi-rg | ft Name, ManagedInstanceName, Location, DefaultSecondaryLoc ation, Status, Collation -AutoSize Name ManagedInstanceName Location DefaultSecondaryLocation Status Collation ---- ------------------- -------- ------------------------ ------ --------- dbi_tools sql-mi-dbi westeurope northeurope Online Latin1_General_CS_AS_KS ApplixEnterprise sql-mi-dbi westeurope northeurope Online SQL_Latin1_General_CP1_CI_AS

Other resources are related to my other virtual client machine to connect to my MI. Indeed, the latter is not exposed through a public endpoint and it is reachable only from an internal network. I didn’t setup a site-to-site VPN to connect the MI from my remote laptop.

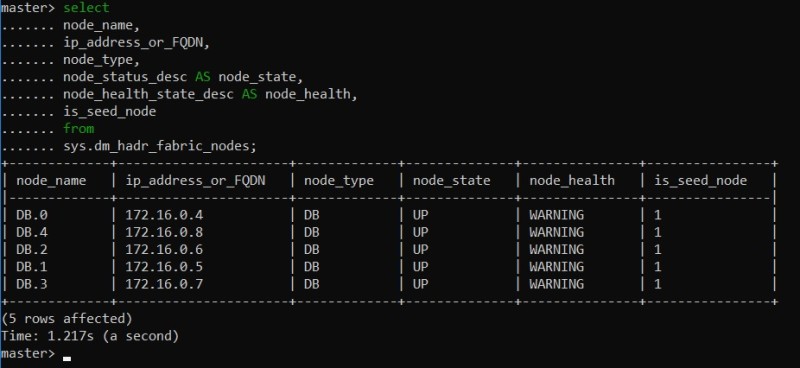

Another point that drew my attention is the high availability feature which is based on remote storage and Azure Service Fabric.

Do you remember of the VirtualClustersql-mi-subnet described earlier? In fact, my MI is built upon a service fabric. Referring to the Microsoft documentation a Service Fabric enables you to build and manage scalable and reliable applications composed of microservices that run at high density on a shared pool of machines, which is referred to as a cluster.

We may get a picture of this underlying cluster from a set of dedicated sys.dm_hadr_fabric_* DMVs with a high-level view of the underlying cluster …

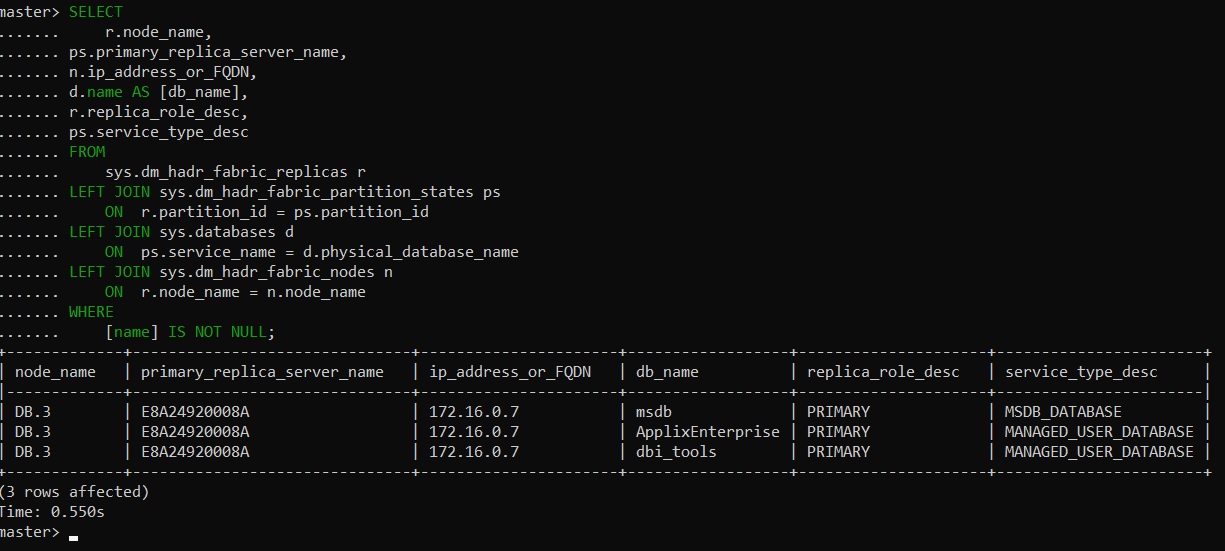

… and a more detailed view including my managed databases:

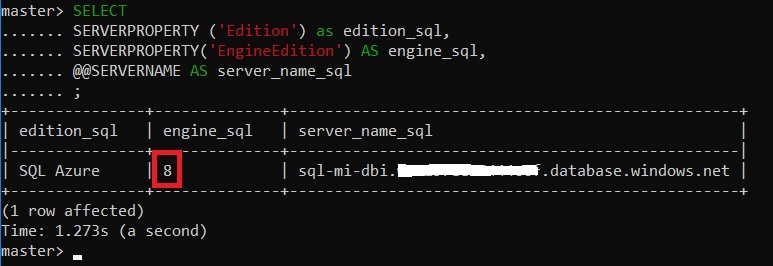

Now let’s get basic information from my MI:

The MI version may be easily identified by the engine_sql number equal to 8.

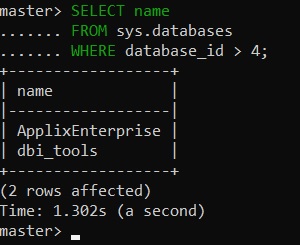

As said previously I have two user databases that exist and they were restored from backups hosted on my blob storage container.

Here an example of commands I used to restore them. You probably recognize the native RESTORE FROM URL syntax. Note also that you have different ways to restore / migrate your databases from on-premises environment with BACPAC and Azure Database Migration Service as well.

RESTORE FILELISTONLY FROM URL = 'https://mikedavemstorage.blob.core.windows.net/backup/ApplixEnterprise2014.bak' RESTORE DATABASE [ApplixEnterprise] FROM URL = 'https://mikedavemstorage.blob.core.windows.net/backup/ApplixEnterprise2014.bak';

Here a list of my existing user databases:

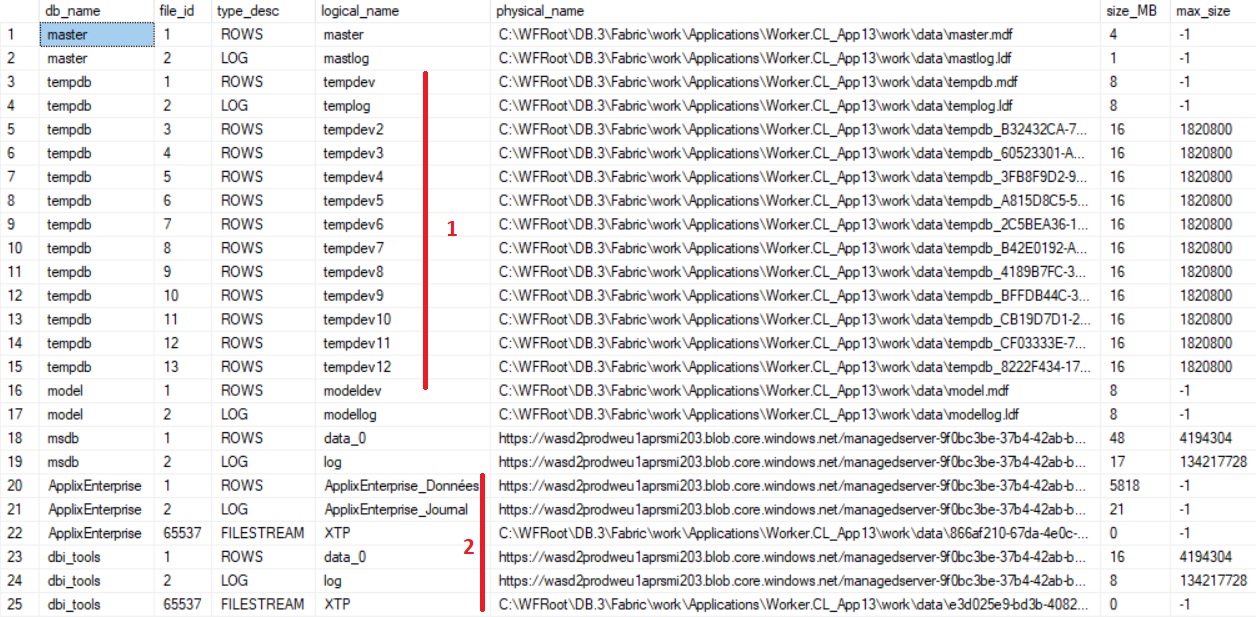

Let’s go further with database files configuration:

SELECT DB_NAME(database_id) AS [db_name], file_id, type_desc, name AS [logical_name], physical_name, state_desc AS [state], size / 128 AS size_MB, max_size FROM sys.master_files; GO

Some interesting points here:

1 – tempdb is pre-configured with 12 data files and 16MB each? Probably far from our usualk recommendation but anyway the preview allows to change it by using DBA usual scripts.

2- We may also notice that the user databases are placed on a different storage types (premium disk according to the Microsoft documentation). System databases are hosted to a local path C:\WFRoot\DB 3\Fabric\work\data\ as well as well the tempdb database. I use a standard tier meaning that system DBs are all on an attached SSD included in the VCore price.

Just for fun, I tried to install our DMK maintenance tool which basically creates a dbi_tools database with maintenance objects (tables and stored procedures) and related SQL Server agent jobs. A databasemail configuration step is also part of the DMK installation and the good news is the feature is available with MIs. However, I quickly ran into was about some ALTER DATABASE commands we use at the beginning of the deployment script:

Msg 5008, Level 16, State 14, Line 72 This ALTER DATABASE statement is not supported. Correct the syntax and execute the statement again. Msg 5069, Level 16, State 1, Line 72 ALTER DATABASE statement failed. Msg 5008, Level 16, State 14, Line 89 This ALTER DATABASE statement is not supported. Correct the syntax and execute the statement again. Msg 5069, Level 16, State 1, Line 89 ALTER DATABASE statement failed.

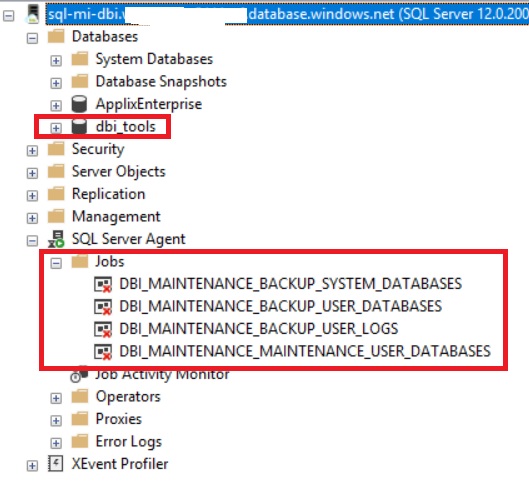

The fix was quite easy and I finally managed to deploy the tool as show below:

The next step consisted in testing our different maintenance tasks:

- Database integrity check task

- Index maintenance task

- Update statistics maintenance task

- Backup task

The first 3 tasks worked well without any modification. However, for backups, I needed to used URL-based backups because it is the only method supported so far. Unfortunately, the current version of our DMK maintenance tool doesn’t not handled correctly it does shared access signatures that come with BACKUP TO URL command since SQL Server 2016. The fix will be included soon to the next release for sure :). For the context of my test I modified a little bit the statement generated by the maintenance objects and it worked perfectly:

-- Backup database dbi_tools BACKUP DATABASE [dbi_tools] TO URL = 'https://sqlmidbistorage.blob.core.windows.net/sqlmidbicontainer/sql-mi-dbi.weu157eee5444ccf.database.windows.net_dbi_tools_1_20180611210123.BAK' WITH COPY_ONLY, CHECKSUM, INIT, FORMAT; --Verification of the backup https://sqlmidbistorage.blob.core.windows.net/sqlmidbicontainer/sql-mi-dbi.weu157eee5444ccf.database.windows.net_dbi_tools_1_20180611210123.BAK RESTORE VERIFYONLY FROM URL = 'https://sqlmidbistorage.blob.core.windows.net/sqlmidbicontainer/sql-mi-dbi.weu157eee5444ccf.database.windows.net_dbi_tools_1_20180611210123.BAK' WITH STATS = 100, CHECKSUM;

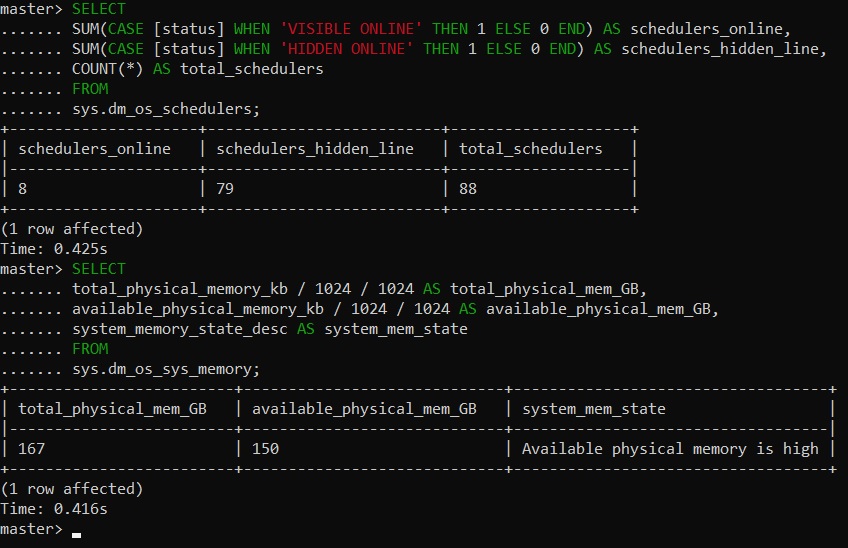

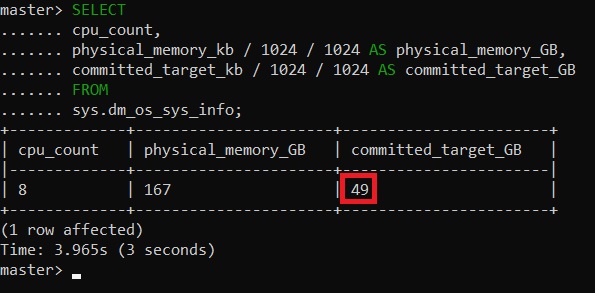

And to finish this blog post as a good DBA, let’s have a look at the resource allocation management. First time I took a look at the resources available on the MI I was very surprised. To get an idea, let’s run some DMVs as sys.dm_os_schedulers, sys.dm_os_sys_memory or sys.dm_os_sys_info DMVs to get a real picture of these aforementioned resources:

Given the number of visible online schedulers only 8 may be used by the MI. This is an expected outcome according to my initial configuration. Concerning the memory configuration, the theorical amount of memory available I can get should be 8 x 7GB = 56GB according the Microsoft documentation and the sys.dm_os_sys_memory DMV doesn’t really indicate such capping while the sys.dm_os_sys_info DMV does (at least closer to the reality)

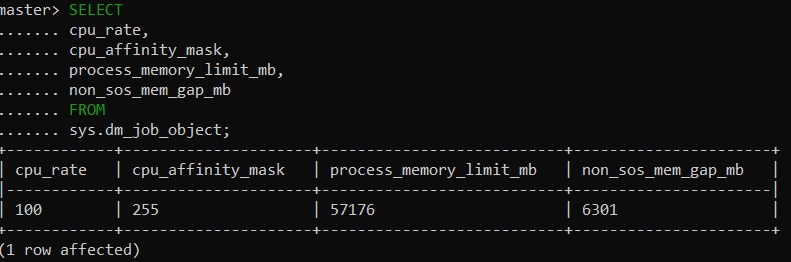

Are the CPU and memory resources managed differently on MI? I found out the right answer in this article from the SQL Server customer advisor team. For MI, this mechanism that is responsible of resource management is called Job Objects. That’s very interesting! Without going into details, this is exactly the same mechanism used by Docker on Windows and it is similar (at least in the concept) to existing CGroups on Linux.

Therefore, we may also benefit from another DMV to get details of resource management:

Thanks to this SQL Server customer advisor team article, the situation becomes clearer with the following parameter values:

- cpu_rate 100% indicates my vCores are used at 100% of their capacity

- cpu_affinity_mask indicates we are limited to 8 OS level processors

- process_memory_limit_mb is self-explanatory and corresponds to my previous theorical assumptions 🙂

- non_sos_mem_gap_mb corresponds to a safe amount of available memory for non-SQLOS activity

Conclusion

I think Microsoft is doing a great strategic play by introducing this solution for customers. Indeed, change is always a challenge and moving towards something almost similar to what we already know allows a smooth transition and a better adoption from humans. We will see what happens!

By David Barbarin

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/12/microsoft-square.png)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/DWE_web-min-scaled.jpg)