If you followed the last two posts in this little series (here and here) this is the current status of this demo migration project: We have converted the Oracle SH schema and applied it to the target AWS Aurora with PostgreSQL compatibility instance. This worked quite well but a few objects (mostly PL/SQL procedures) would need manual actions. The next and final step is to replicate the data from Oracle to the Aurora instance using AWS DMS (Data Migration Service). As with all logical replications this is a two step process: First a consistent state of the current data needs to be loaded to the target. Once that is completed changes that happened since the load on the source need to be captured and replayed on the target.

Coming back to our current setup:

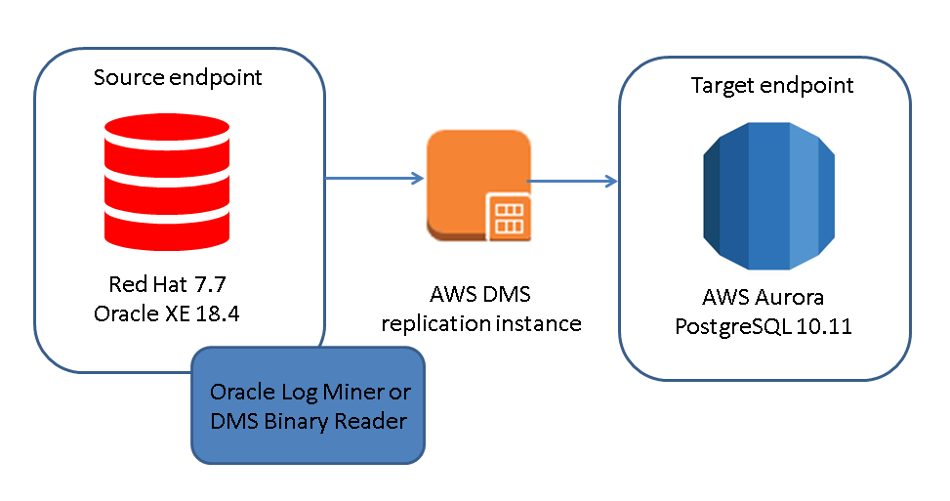

What we do not need anymore is the Windows host, this one was only required for the AWS Schema Conversion Utility (although you can install that on Linux and macOS as well). The target setup will look like this:

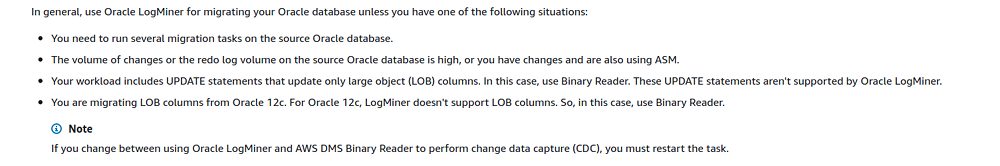

The key point here is the DMS replication instance. This one will connect to a source end point, mines the transaction logs and replays all the changes to the target end point. The replication instance will also take care of the initial load of the data. You have two choices for log mining, either Oracle LogMiner or the AWS DMS Binary Reader which is an AWS implementation for mining the log files. AWS recommends to use Oracle LogMiner in most situation except:

Before we go on and create the replication instance we need to prepare the Oracle instance. The first requirement is, that the Oracle instance need to be in archiving mode:

[oracle@ip-10-0-1-146 ~]$ sqlplus sys/manager@localhost/XE as sysdba SQL*Plus: Release 18.0.0.0.0 - Production on Fri Mar 20 10:46:49 2020 Version 18.4.0.0.0 Copyright (c) 1982, 2018, Oracle. All rights reserved. Connected to: Oracle Database 18c Express Edition Release 18.0.0.0.0 - Production Version 18.4.0.0.0 SQL> shutdown immediate; ... [oracle@ip-10-0-1-146 ~]$ sqlplus / as sysdba SQL*Plus: Release 18.0.0.0.0 - Production on Fri Mar 20 10:48:14 2020 Version 18.4.0.0.0 Copyright (c) 1982, 2018, Oracle. All rights reserved. Connected to an idle instance. SQL> startup mount ORACLE instance started. Total System Global Area 1191181104 bytes Fixed Size 8895280 bytes Variable Size 436207616 bytes Database Buffers 738197504 bytes Redo Buffers 7880704 bytes Database mounted. SQL> alter database archivelog; Database altered. SQL> alter database open; Database altered. SQL> archive log list; Database log mode Archive Mode Automatic archival Enabled Archive destination /opt/oracle/product/18c/dbhomeXE/dbs/arch Oldest online log sequence 2 Next log sequence to archive 4 Current log sequence 4

The second requirement is that supplemental logging needs to be enabled:

SQL> ALTER DATABASE ADD SUPPLEMENTAL LOG DATA; ALTER DATABASE ADD SUPPLEMENTAL LOG DATA * ERROR at line 1: ORA-44608: Supplemental logging settings cannot be modified in Oracle Database Express Edition. SQL> SELECT supplemental_log_data_min FROM v$database; SUPPLEME -------- NO SQL>

So, with our setup this is not possible as Oracle XE 18 does not support supplemental logging. What we can do is to load the complete data, but we can not capture changes. For some migrations that might actually be fine for others the downtime for fully loading the data is not acceptable. So lets see how far we will come.

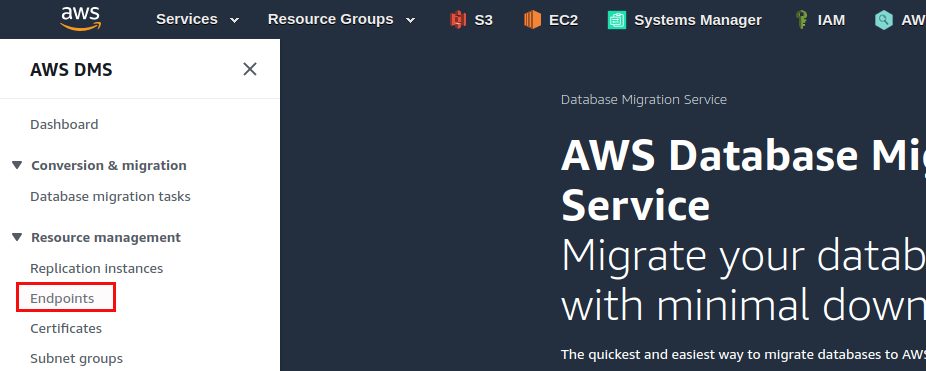

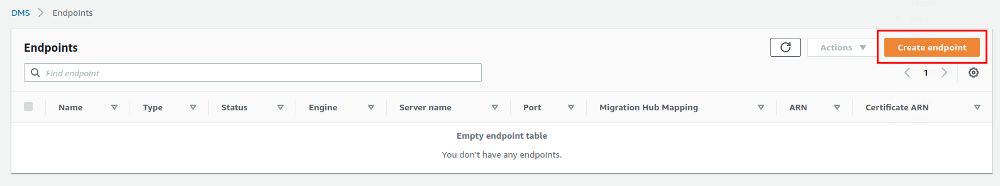

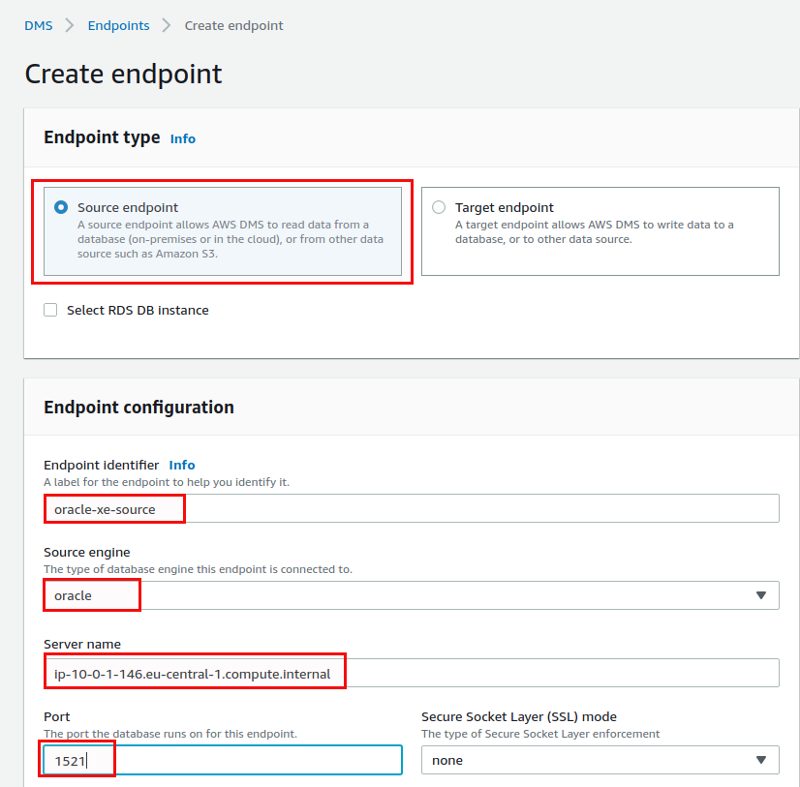

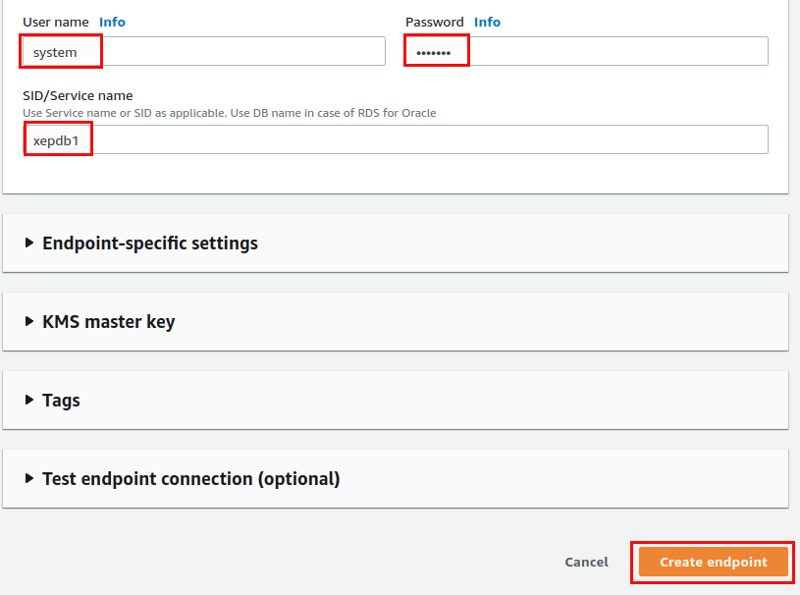

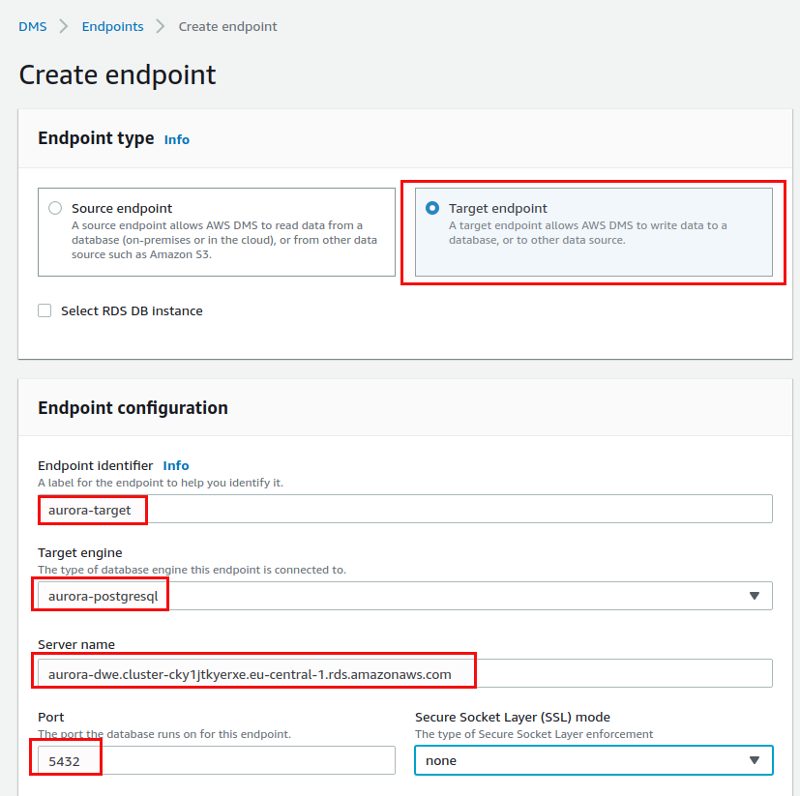

Step one is creating the end points. One for Oracle as the source:

… and the other one for the target:

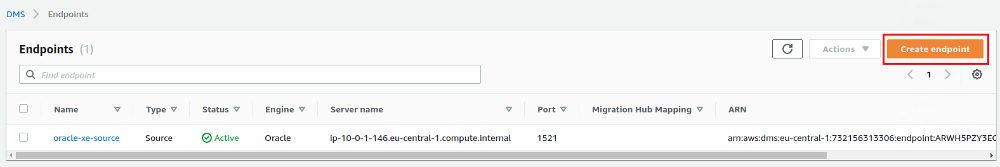

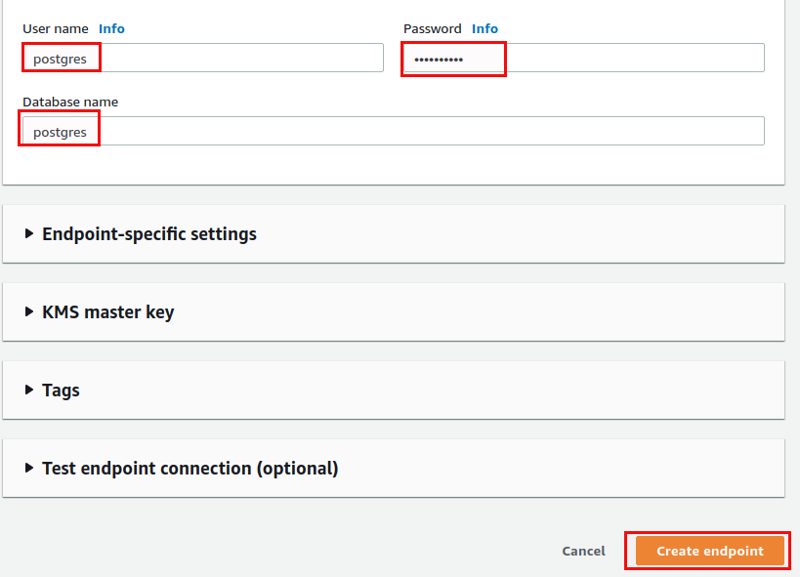

Both end points are now ready:

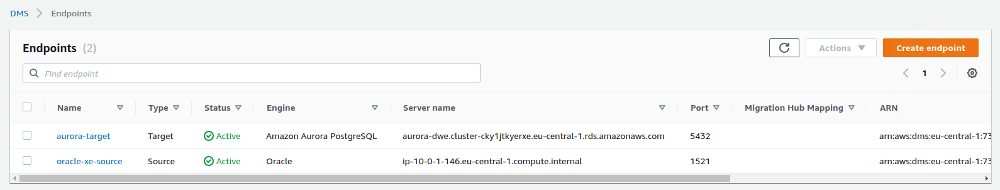

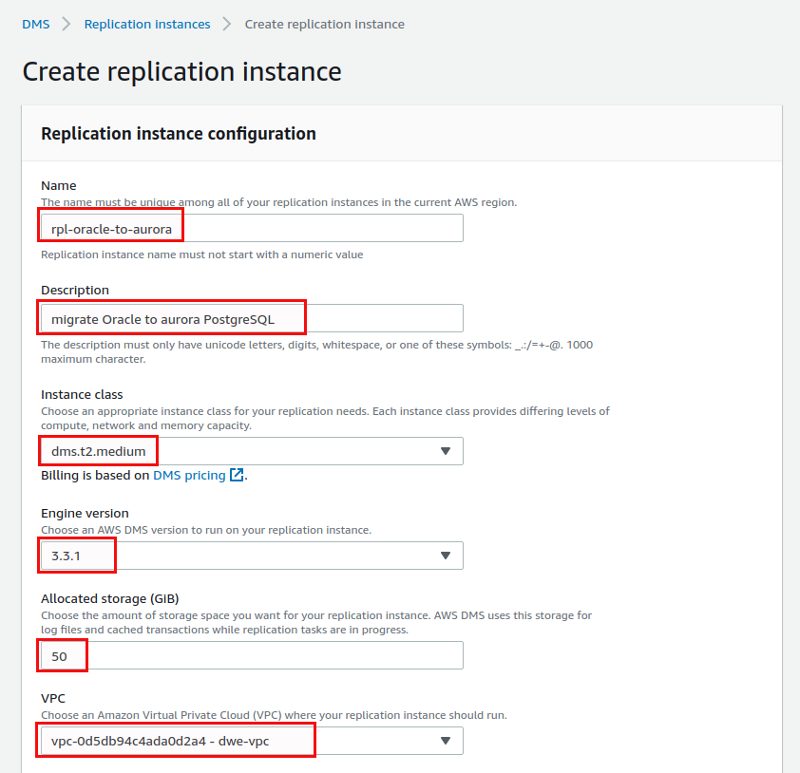

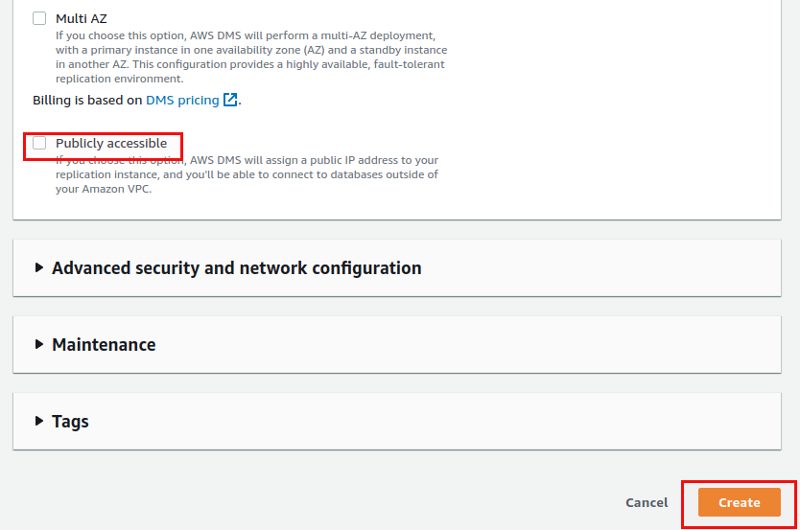

Next we need the replication instance:

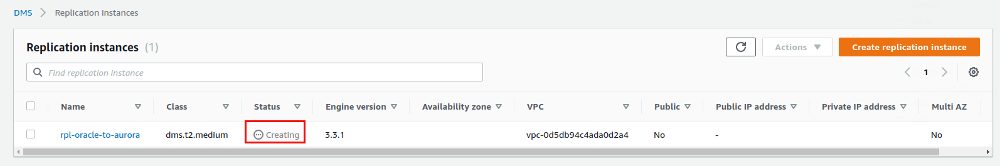

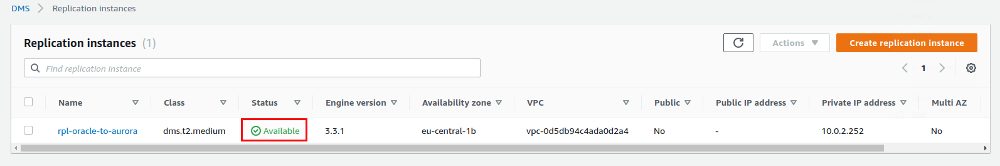

The creation of the replication instance will take some time:

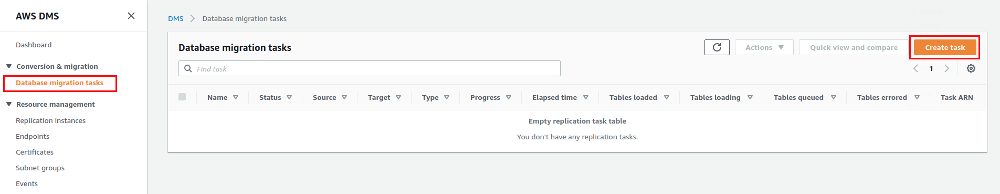

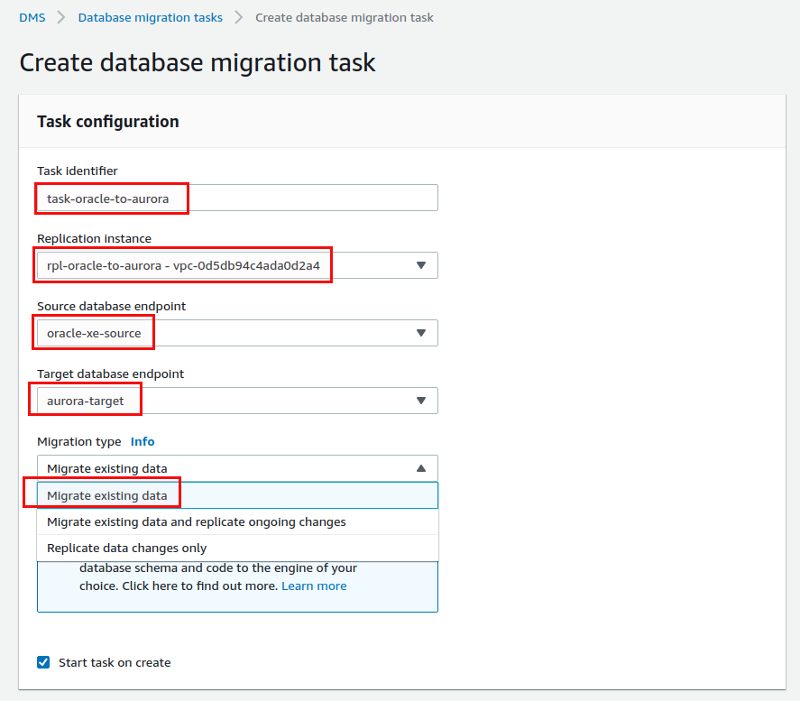

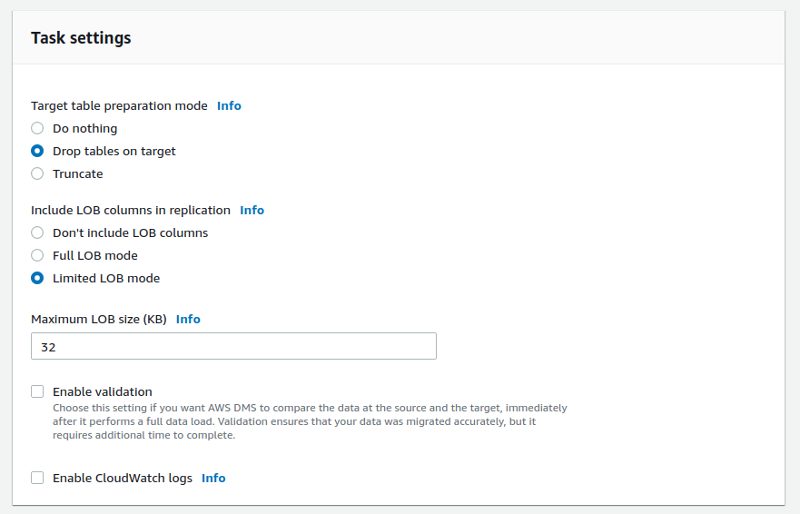

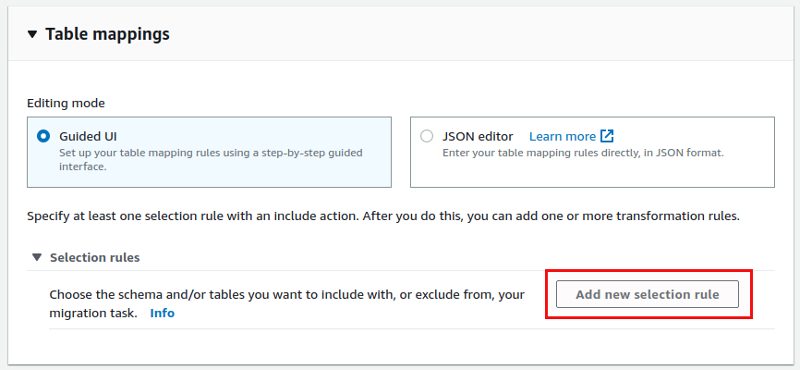

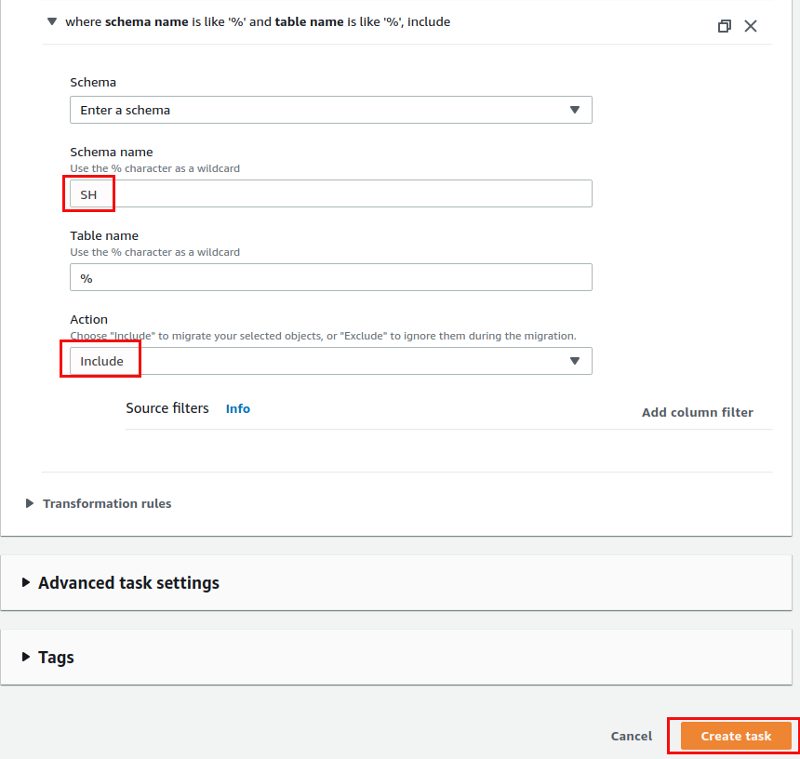

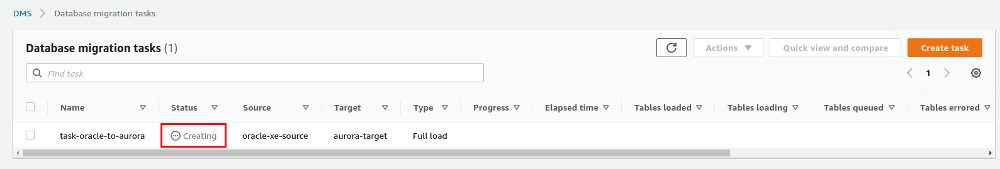

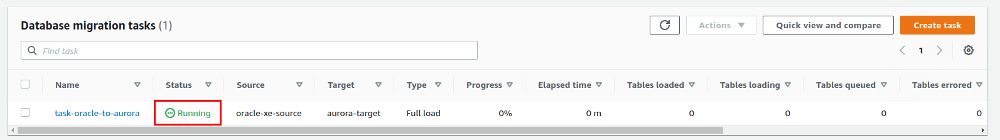

Now we need something that connects the end points with the replication instance and that is the goal of the “Database migration tasks”:

Without log mining we can only go for “Migrate existing data”:

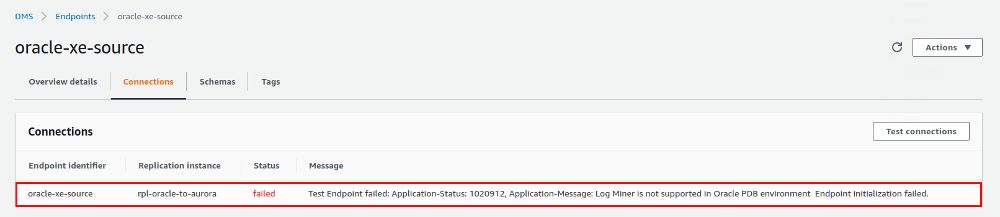

Nothing really happens, and the reason is this:

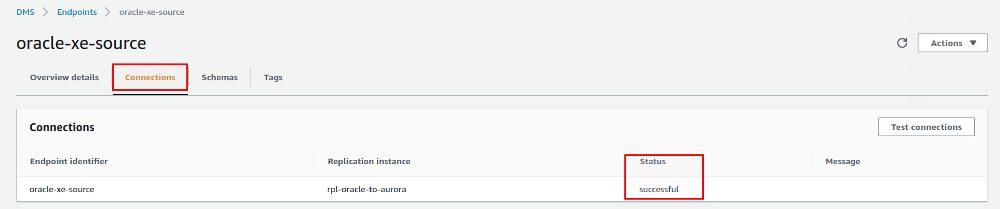

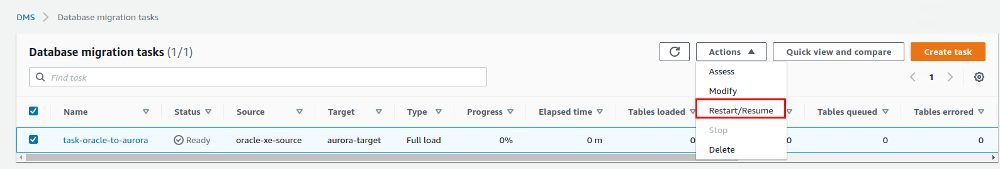

So, with our current setup we can not proceed. What I did is: I took one of our training EC2 instances which runs Oracle 19c, enabled archive mode, supplemental logging and installed the same sample schemas. Now the connection is successful:

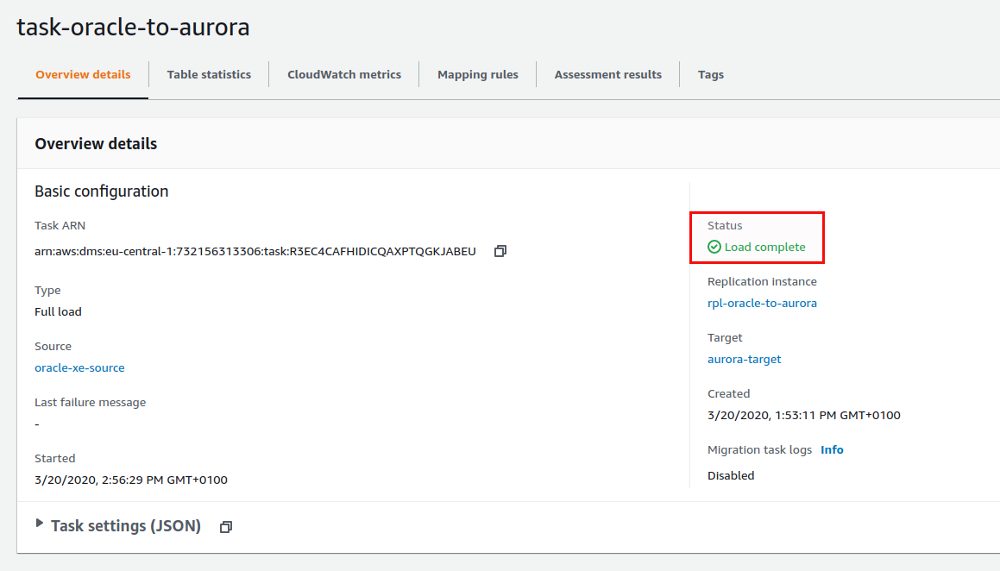

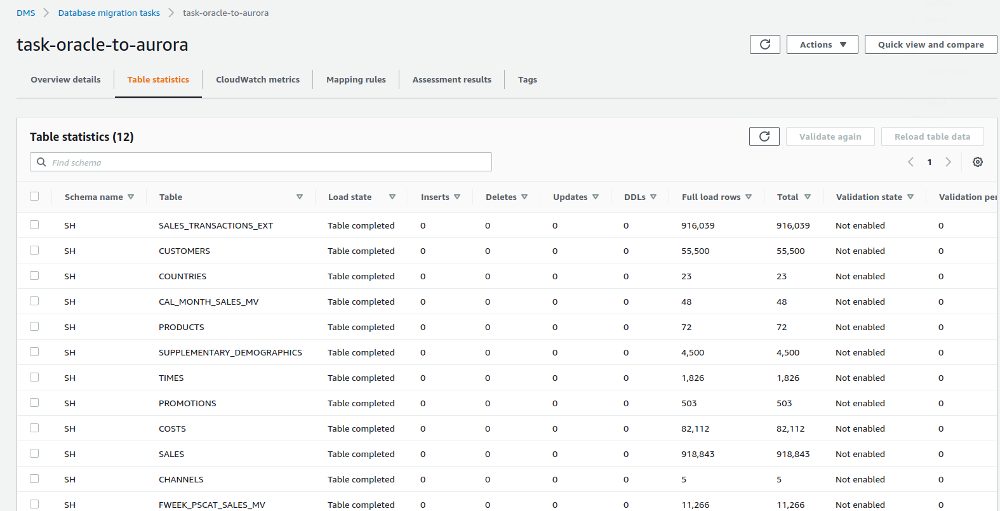

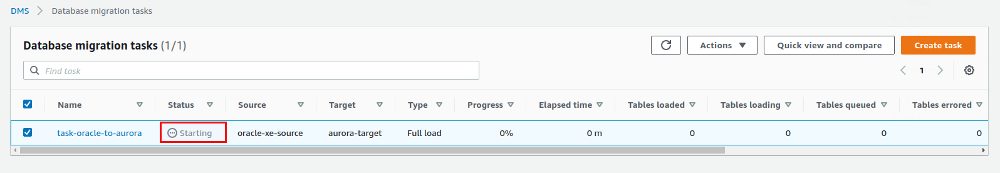

After a few seconds the load is complete:

That was quite easy, lets see if there really is something in the Aurora instance:

postgres=> select * from "SH"."PRODUCTS";

PROD_ID | PROD_NAME | PROD_DESC | PROD_SUBCATEGORY | PROD_SUBCATEGORY_ID | PROD_SUBCATEGORY_DESC | PROD_CATEGORY | PROD_CATEGORY_ID | PROD_CATEGORY_DESC | PROD_WEIG

HT_CLASS | PROD_UNIT_OF_MEASURE | PROD_PACK_SIZE | SUPPLIER_ID | PROD_STATUS | PROD_LIST_PRICE | PROD_MIN_PRICE | PROD_TOTAL | PROD_TOTAL_ID | PROD_SRC_ID | PROD_EFF_FROM | PROD_EFF_TO | PROD_VALID

---------+------------------------------------------------+-----------------------------------------------------+----------------------+---------------------+-----------------------+-----------------------------+------------------+-----------------------------+----------

---------+----------------------+----------------+-------------+-------------+-----------------+----------------+------------+---------------+-------------+---------------------+-------------+------------

13 | 5MP Telephoto Digital Camera | 5MP Telephoto Digital Camera | Cameras | 2044.0000000000 | Cameras | Photo | 204.0000000000 | Photo |

1 | U | P | 1 | STATUS | 899.99 | 899.99 | TOTAL | 1.0000000000 | | 1998-01-01 00:00:00 | | A

14 | 17" LCD w/built-in HDTV Tuner | 17" LCD w/built-in HDTV Tuner | Monitors | 2035.0000000000 | Monitors | Peripherals and Accessories | 203.0000000000 | Peripherals and Accessories |

1 | U | P | 1 | STATUS | 999.99 | 999.99 | TOTAL | 1.0000000000 | | 1998-01-01 00:00:00 | | A

15 | Envoy 256MB - 40GB | Envoy 256MB - 40Gb | Desktop PCs | 2021.0000000000 | Desktop PCs | Hardware | 202.0000000000 | Hardware |

1 | U | P | 1 | STATUS | 999.99 | 999.99 | TOTAL | 1.0000000000 | | 1998-01-01 00:00:00 | | A

16 | Y Box | Y Box | Game Consoles | 2011.0000000000 | Game Consoles | Electronics | 201.0000000000 | Electronics |

1 | U | P | 1 | STATUS | 299.99 | 299.99 | TOTAL | 1.0000000000 | | 1998-01-01 00:00:00 | | A

17 | Mini DV Camcorder with 3.5" Swivel LCD | Mini DV Camcorder with 3.5" Swivel LCD | Camcorders | 2041.0000000000 | Camcorders | Photo | 204.0000000000 | Photo |

1 | U | P | 1 | STATUS | 1099.99 | 1099.99 | TOTAL | 1.0000000000 | | 1998-01-01 00:00:00 | | A

18 | Envoy Ambassador | Envoy Ambassador | Portable PCs | 2022.0000000000 | Portable PCs | Hardware | 202.0000000000 | Hardware |

1 | U | P | 1 | STATUS | 1299.99 | 1299.99 | TOTAL | 1.0000000000 | | 1998-01-01 00:00:00 | | A

Looks fine. Once you get used to the DMS interface and know what the differnent pieces are really there for it is quite easy to get a simple migration going. In the next post we’ll see how chance capture can be configured so that changes on the source are automatically replicated to the target.

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/DWE_web-min-scaled.jpg)