When I attended PGIBZ 2019 earlier this year, I talked with Dimitri about pg_auto_failover and I promised to have a look at it. Well, almost half a year later and after we’ve met again at pgconf.eu it is time to actually do that. You probably already know that citudata was acquired by Microsoft earlier this year and that Microsoft seems to be committed to open source since a few years. pg_auto_failover is one of the projects they contribute back to the PostgreSQL community. This will be a multi-blog series and in this very first post it is all about getting it up and running. In a following post we will then look at failover and switchover scenarios.

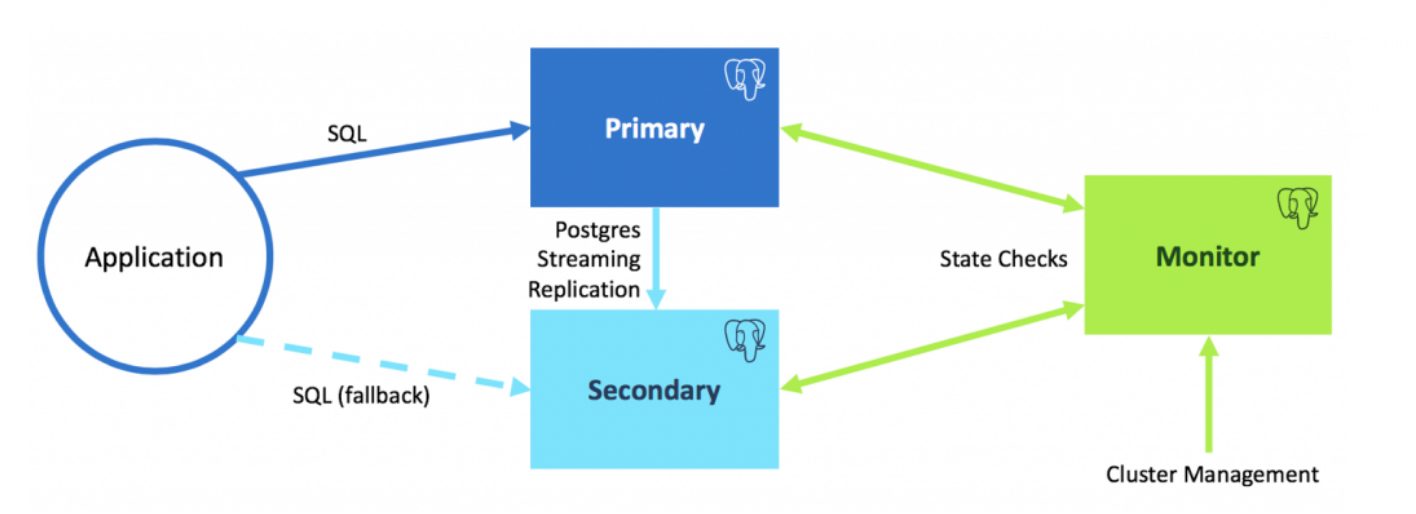

As usual, when you need auto failover you need at least three nodes and pg_auto_failover is no exception to that. The following graphic is stolen from the pg_auto_failover github page:

We have one PostgreSQL master, one PostgreSQL replica and in addition a monitoring host. In may case that maps to:

| pg-af1.ti.dbi-services.com | master | 192.168.22.70 | pg-af2.ti.dbi-services.com | replica | 192.168.22.71 | pg-af3.ti.dbi-services.com | monitor/cluster management | 192.168.22.72 |

All of these nodes run CentOS 8 and I will be going from source code as that gives most flexibility. As pg_auto_failover depends on PostgreSQL (of course) the first step is to install PostgreSQL on all three nodes (PostgreSQL 12 in this setup). If you need further information on how to do that you can e.g. check here. Basically these steps have been executed on all the three nodes (given that the postgres user already exists and sudo is configured):

[postgres@pg-af1 ~]$ sudo dnf install -y gcc openldap-devel python36-devel readline-devel redhat-lsb bison flex perl-ExtUtils-Embed zlib-devel openssl-devel pam-devel libxml2-devel libxslt-devel openssh-clients bzip2 net-tools wget unzip sysstat xorg-x11-xauth systemd-devel bash-completion python36 policycoreutils-python-utils make git

[postgres@pg-af1 ~]$ wget https://ftp.postgresql.org/pub/source/v12.0/postgresql-12.0.tar.bz2

[postgres@pg-af1 ~]$ tar -axf postgresql-12.0.tar.bz2

[postgres@pg-af1 ~]$ cd postgresql-12.0

[postgres@pg-af1 postgresql-12.0]$ sudo mkdir -p /u01 /u02

[postgres@pg-af1 postgresql-12.0]$ sudo chown postgres:postgres /u01 /u02

[postgres@pg-af1 postgresql-12.0]$ PGHOME=/u01/app/postgres/product/12/db_0/

[postgres@pg-af1 postgresql-12.0]$ SEGSIZE=2

[postgres@pg-af1 postgresql-12.0]$ BLOCKSIZE=8

[postgres@pg-af1 postgresql-12.0]$ WALSEGSIZE=64

[postgres@pg-af1 postgresql-12.0]$ ./configure --prefix=${PGHOME} \

> --exec-prefix=${PGHOME} \

> --bindir=${PGHOME}/bin \

> --libdir=${PGHOME}/lib \

> --sysconfdir=${PGHOME}/etc \

> --includedir=${PGHOME}/include \

> --datarootdir=${PGHOME}/share \

> --datadir=${PGHOME}/share \

> --with-pgport=5432 \

> --with-perl \

> --with-python \

> --with-openssl \

> --with-pam \

> --with-ldap \

> --with-libxml \

> --with-libxslt \

> --with-segsize=${SEGSIZE} \

> --with-blocksize=${BLOCKSIZE} \

> --with-systemd \

> --with-extra-version=" dbi services build"

[postgres@pg-af1 postgresql-12.0]$ make all

[postgres@pg-af1 postgresql-12.0]$ make install

[postgres@pg-af1 postgresql-12.0]$ cd contrib

[postgres@pg-af1 contrib]$ make install

[postgres@pg-af1 contrib]$ cd ../..

[postgres@pg-af1 ~]$ rm -rf postgresql*

We will go for an installation from source code of pg_auto_failover as well (again, on all three nodes):

postgres@pg-af1:/home/postgres/ [pg120] git clone https://github.com/citusdata/pg_auto_failover.git postgres@pg-af1:/home/postgres/ [pg120] cd pg_auto_failover/ postgres@pg-af1:/home/postgres/pg_auto_failover/ [pg120] make postgres@pg-af1:/home/postgres/pg_auto_failover/ [pg120] make install postgres@pg-af1:/home/postgres/pg_auto_failover/ [pg120] cd .. postgres@pg-af1:/home/postgres/ [pg120] rm -rf pg_auto_failover/

That’s it, quite easy. What I like especially is, that there are no dependencies on python or any other libraries except for PostgreSQL. What the installation gives us is basically pg_autoctl:

postgres@pg-af1:/home/postgres/ [pg120] pg_autoctl --help pg_autoctl: pg_auto_failover control tools and service usage: pg_autoctl [ --verbose --quiet ] Available commands: pg_autoctl + create Create a pg_auto_failover node, or formation + drop Drop a pg_auto_failover node, or formation + config Manages the pg_autoctl configuration + show Show pg_auto_failover information + enable Enable a feature on a formation + disable Disable a feature on a formation run Run the pg_autoctl service (monitor or keeper) stop signal the pg_autoctl service for it to stop reload signal the pg_autoctl for it to reload its configuration help print help message version print pg_autoctl version

The first step in setting up the cluster is to initialize the monitoring node:

postgres@pg-af3:/home/postgres/ [pg120] pg_autoctl create --help pg_autoctl create: Create a pg_auto_failover node, or formation Available commands: pg_autoctl create monitor Initialize a pg_auto_failover monitor node postgres Initialize a pg_auto_failover standalone postgres node formation Create a new formation on the pg_auto_failover monitor postgres@pg-af3:/home/postgres/ [pg120] sudo mkdir -p /u02/pgdata postgres@pg-af3:/home/postgres/ [pg120] sudo chown postgres:postgres /u02/pgdata postgres@pg-af3:/home/postgres/ [pg120] unset PGDATABASE postgres@pg-af3:/home/postgres/ [] pg_autoctl create monitor --pgdata /u02/pgdata/PG12/af INFO Initialising a PostgreSQL cluster at "/u02/pgdata/PG12/af" INFO /u01/app/postgres/product/12/db_0/bin/pg_ctl --pgdata /u02/pgdata/PG12/af --options "-p 5432" --options "-h *" --waitstart INFO Granting connection privileges on 192.168.22.0/24 INFO Your pg_auto_failover monitor instance is now ready on port 5432. INFO pg_auto_failover monitor is ready at postgres://autoctl_node@pg-af3:5432/pg_auto_failover INFO Monitor has been succesfully initialized.

Once that succeeds you’ll a new PostgreSQL instance running and pg_auto_failover PostgreSQL background worker processes:

postgres@pg-af3:/home/postgres/ [af] ps -ef | grep "postgres:" postgres 5958 5955 0 14:15 ? 00:00:00 postgres: checkpointer postgres 5959 5955 0 14:15 ? 00:00:00 postgres: background writer postgres 5960 5955 0 14:15 ? 00:00:00 postgres: walwriter postgres 5961 5955 0 14:15 ? 00:00:00 postgres: autovacuum launcher postgres 5962 5955 0 14:15 ? 00:00:00 postgres: stats collector postgres 5963 5955 0 14:15 ? 00:00:00 postgres: pg_auto_failover monitor postgres 5964 5955 0 14:15 ? 00:00:00 postgres: logical replication launcher postgres 5965 5955 0 14:15 ? 00:00:00 postgres: pg_auto_failover monitor worker postgres 5966 5955 0 14:15 ? 00:00:00 postgres: pg_auto_failover monitor worker

The initialization of the monitor node also created a new database and two roles:

postgres@pg-af3:/home/postgres/ [af] psql postgres

psql (12.0 dbi services build)

Type "help" for help.

postgres=# \l

List of databases

Name | Owner | Encoding | Collate | Ctype | Access privileges

------------------+----------+----------+-------------+-------------+-----------------------

pg_auto_failover | autoctl | UTF8 | en_US.UTF-8 | en_US.UTF-8 |

postgres | postgres | UTF8 | en_US.UTF-8 | en_US.UTF-8 |

template0 | postgres | UTF8 | en_US.UTF-8 | en_US.UTF-8 | =c/postgres +

| | | | | postgres=CTc/postgres

template1 | postgres | UTF8 | en_US.UTF-8 | en_US.UTF-8 | =c/postgres +

| | | | | postgres=CTc/postgres

postgres=# \du

List of roles

Role name | Attributes | Member of

--------------+------------------------------------------------------------+-----------

autoctl | | {}

autoctl_node | | {}

postgres | Superuser, Create role, Create DB, Replication, Bypass RLS | {}

What we got in the new database is the pgautofailover extension:

pg_auto_failover=# \dx List of installed extensions Name | Version | Schema | Description ----------------+---------+------------+------------------------------ pgautofailover | 1.0 | public | pg_auto_failover plpgsql | 1.0 | pg_catalog | PL/pgSQL procedural language (2 rows)

For our management kit to work properly a few PostgreSQL parameters will be set:

pg_auto_failover=# alter system set log_truncate_on_rotation = 'on'; ALTER SYSTEM pg_auto_failover=# alter system set log_filename = 'postgresql-%a.log'; ALTER SYSTEM pg_auto_failover=# alter system set log_rotation_age = '1440'; ALTER SYSTEM pg_auto_failover=# alter system set log_line_prefix = '%m - %l - %p - %h - %u@%d - %x'; ALTER SYSTEM pg_auto_failover=# alter system set log_directory = 'pg_log'; ALTER SYSTEM pg_auto_failover=# alter system set log_min_messages = 'WARNING'; ALTER SYSTEM pg_auto_failover=# alter system set log_autovacuum_min_duration = '60s'; ALTER SYSTEM pg_auto_failover=# alter system set log_min_error_statement = 'NOTICE'; ALTER SYSTEM pg_auto_failover=# alter system set log_min_duration_statement = '30s'; ALTER SYSTEM pg_auto_failover=# alter system set log_checkpoints = 'on'; ALTER SYSTEM pg_auto_failover=# alter system set log_statement = 'ddl'; ALTER SYSTEM pg_auto_failover=# alter system set log_lock_waits = 'on'; ALTER SYSTEM pg_auto_failover=# alter system set log_temp_files = '0'; ALTER SYSTEM pg_auto_failover=# alter system set log_timezone = 'Europe/Zurich'; ALTER SYSTEM pg_auto_failover=# alter system set log_connections=on; ALTER SYSTEM pg_auto_failover=# alter system set log_disconnections=on; ALTER SYSTEM pg_auto_failover=# alter system set log_duration=on; ALTER SYSTEM pg_auto_failover=# select pg_reload_conf(); pg_reload_conf ---------------- t (1 row)

What we need for the other nodes is the connection string to the monitoring node:

postgres@pg-af3:/home/postgres/ [af] pg_autoctl show uri postgres://autoctl_node@pg-af3:5432/pg_auto_failover

Once we have that we can proceed with creating the master instance on the first host:

postgres@pg-af1:/home/postgres/ [pg120] unset PGDATABASE postgres@pg-af1:/home/postgres/ [] sudo mkdir /u02/pgdata postgres@pg-af1:/home/postgres/ [] sudo chown postgres:postgres /u02/pgdata postgres@pg-af1:/home/postgres/ [] pg_autoctl create postgres --pgdata /u02/pgdata/12/PG1 --nodename pg-af1.it.dbi-services.com --monitor postgres://autoctl_node@pg-af3:5432/pg_auto_failover INFO Found pg_ctl for PostgreSQL 12.0 at /u01/app/postgres/product/12/db_0/bin/pg_ctl INFO Registered node pg-af1.it.dbi-services.com:5432 with id 1 in formation "default", group 0. INFO Writing keeper init state file at "/home/postgres/.local/share/pg_autoctl/u02/pgdata/12/PG1/pg_autoctl.init" INFO Successfully registered as "single" to the monitor. INFO Initialising a PostgreSQL cluster at "/u02/pgdata/12/PG1" INFO Postgres is not running, starting postgres INFO /u01/app/postgres/product/12/db_0/bin/pg_ctl --pgdata /u02/pgdata/12/PG1 --options "-p 5432" --options "-h *" --wait start INFO The user "postgres" already exists, skipping. INFO CREATE DATABASE postgres; INFO The database "postgres" already exists, skipping. INFO FSM transition from "init" to "single": Start as a single node INFO Initialising postgres as a primary INFO Transition complete: current state is now "single" INFO Keeper has been succesfully initialized.

Once the master if up bring up the replica on the second node:

postgres@pg-af2:/home/postgres/ [pg120] pg_autoctl create postgres --pgdata /u02/pgdata/12/PG1 --nodename pg-af2.it.dbi-services.com --monitor postgres://autoctl_node@pg-af3:5432/pg_auto_failover 17:11:42 INFO Registered node pg-af2.it.dbi-services.com:5432 with id 2 in formation "default", group 0. 17:11:42 INFO Writing keeper init state file at "/home/postgres/.local/share/pg_autoctl/u02/pgdata/12/PG1/pg_autoctl.init" 17:11:42 INFO Successfully registered as "wait_standby" to the monitor. 17:11:42 INFO FSM transition from "init" to "wait_standby": Start following a primary 17:11:42 INFO Transition complete: current state is now "wait_standby" 17:11:47 INFO FSM transition from "wait_standby" to "catchingup": The primary is now ready to accept a standby 17:11:47 INFO The primary node returned by the monitor is pg-af1.it.dbi-services.com:5432 17:11:47 INFO Initialising PostgreSQL as a hot standby 17:11:47 INFO Running /u01/app/postgres/product/12/db_0/bin/pg_basebackup -w -h pg-af1.it.dbi-services.com -p 5432 --pgdata /u02/pgdata/12/backup -U pgautofailover_replicator --write-recovery-conf --max-rate 100M --wal-method=stream --slot pgautofailover_standby ... 17:11:49 INFO pg_basebackup: initiating base backup, waiting for checkpoint to complete pg_basebackup: checkpoint completed pg_basebackup: write-ahead log start point: 0/2000028 on timeline 1 pg_basebackup: starting background WAL receiver 0/23699 kB (0%), 0/1 tablespace (/u02/pgdata/12/backup/backup_label ) 136/23699 kB (0%), 0/1 tablespace (/u02/pgdata/12/backup/global/4184 ) 23708/23708 kB (100%), 0/1 tablespace (...data/12/backup/global/pg_control) 23708/23708 kB (100%), 1/1 tablespace pg_basebackup: write-ahead log end point: 0/2000100 pg_basebackup: waiting for background process to finish streaming ... pg_basebackup: syncing data to disk ... pg_basebackup: base backup completed 17:11:49 INFO Postgres is not running, starting postgres 17:11:49 INFO /u01/app/postgres/product/12/db_0/bin/pg_ctl --pgdata /u02/pgdata/12/PG1 --options "-p 5432" --options "-h *" --wait start 17:11:50 INFO PostgreSQL started on port 5432 17:11:50 INFO Transition complete: current state is now "catchingup" 17:11:50 INFO Keeper has been succesfully initialized.

The next step is to start the so called keeper process (this is the process which communicates with the montoring node about state changes):

postgres@pg-af1:/home/postgres/ [] pg_autoctl run --pgdata /u02/pgdata/12/PG1 INFO Managing PostgreSQL installation at "/u02/pgdata/12/PG1" INFO The version of extenstion "pgautofailover" is "1.0" on the monitor INFO pg_autoctl service is starting INFO Calling node_active for node default/1/0 with current state: single, PostgreSQL is running, sync_state is "", current lsn is "0/0". INFO Calling node_active for node default/1/0 with current state: single, PostgreSQL is running, sync_state is "", current lsn is "0/0". INFO Calling node_active for node default/1/0 with current state: single, PostgreSQL is running, sync_state is "", current lsn is "0/0". INFO Calling node_active for node default/1/0 with current state: single, PostgreSQL is running, sync_state is "", current lsn is "0/0".

To integrate that into systemd:

postgres@pg-af2:/home/postgres/ [PG1] pg_autoctl show systemd 20:28:43 INFO HINT: to complete a systemd integration, run the following commands: 20:28:43 INFO pg_autoctl -q show systemd --pgdata "/u02/pgdata/12/PG1" | sudo tee /etc/systemd/system/pgautofailover.service 20:28:43 INFO sudo systemctl daemon-reload 20:28:43 INFO sudo systemctl start pgautofailover [Unit] Description = pg_auto_failover [Service] WorkingDirectory = /u02/pgdata/12/PG1 Environment = 'PGDATA=/u02/pgdata/12/PG1' User = postgres ExecStart = /u01/app/postgres/product/12/db_0/bin/pg_autoctl run Restart = always StartLimitBurst = 0 [Install] WantedBy = multi-user.target postgres@pg-af2:/home/postgres/ [PG1] pg_autoctl -q show systemd --pgdata "/u02/pgdata/12/PG1" | sudo tee /etc/systemd/system/pgautofailover.service [Unit] Description = pg_auto_failover [Service] WorkingDirectory = /u02/pgdata/12/PG1 Environment = 'PGDATA=/u02/pgdata/12/PG1' User = postgres ExecStart = /u01/app/postgres/product/12/db_0/bin/pg_autoctl run Restart = always StartLimitBurst = 0 [Install] WantedBy = multi-user.target postgres@pg-af2:/home/postgres/ [PG1] systemctl list-unit-files | grep pgauto pgautofailover.service disabled 20:30:57 postgres@pg-af2:/home/postgres/ [PG1] sudo systemctl enable pgautofailover.service Created symlink /etc/systemd/system/multi-user.target.wants/pgautofailover.service → /etc/systemd/system/pgautofailover.service.

If you are on CentOS/Red Hat 8 you will also need this as otherwise the service will not start:

postgres@pg-af1:/u01/app/postgres/local/dmk/ [PG1] sudo semanage fcontext -a -t bin_t /u01/app/postgres/product/12/db_0/bin/pg_autoctl postgres@pg-af1:/u01/app/postgres/local/dmk/ [PG1] restorecon -v /u01/app/postgres/product/12/db_0/bin/pg_autoctl

After rebooting all the nodes (to confirm that the systemd service is working as expected) the state of the cluster reports one primary and a secondary/replica as expected:

postgres@pg-af3:/home/postgres/ [af] pg_autoctl show state Name | Port | Group | Node | Current State | Assigned State ---------------------------+--------+-------+-------+-------------------+------------------ pg-af1.it.dbi-services.com | 5432 | 0 | 1 | primary | primary pg-af2.it.dbi-services.com | 5432 | 0 | 2 | secondary | secondary

The various states are documented here.

Remember: As this is based on PostgreSQL 12 there will be no recovery.conf on the replica. The replication parameters have been added to postgresql.auto.conf automatically:

postgres@pg-af2:/u02/pgdata/12/PG1/ [PG1] cat postgresql.auto.conf # Do not edit this file manually! # It will be overwritten by the ALTER SYSTEM command. primary_conninfo = 'user=pgautofailover_replicator passfile=''/home/postgres/.pgpass'' connect_timeout=5 host=''pg-af1.it.dbi-services.com'' port=5432 sslmode=prefer sslcompression=0 gssencmode=disable target_session_attrs=any' primary_slot_name = 'pgautofailover_standby'

That’s it for the setup. Really easy and simple, I like it. In the next post we’ll have a look at controlled switch-overs and fail-over scenarios.

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/DWE_web-min-scaled.jpg)