The initial release (8.0.0) of Red Hat Enterprise Linux 8 has been available since May 2019.

I’ve already blogged about one of its new features (AppStream) during the beta. In this post I present Stratis, a new local storage-management solution available on RHEL 8.

Introduction

LVM, fdisk, ext*, XFS… there are plenty of terms, tools and technologies for managing disks and file systems on a Linux server. Setting up the initial storage configuration is generally not difficult, but managing that storage afterwards (which most of the time means extending it) is where things can get more complicated.

The goal of Stratis is to provide an easy way to work with local storage, from the initial setup to the use of more advanced features.

Like Btrfs or ZFS, Stratis is a “volume-managing filesystem” (VMF). A VMF’s particularity is that it merges the volume-management and filesystem layers into one, using the concept of a “pool” of storage created from one or more block devices.

Stratis is implemented as a userspace daemon that configures and monitors existing components (device-mapper and XFS) :

[root@rhel8 ~]# ps -ef | grep stratis

root 591 1 0 15:31 ? 00:00:00 /usr/libexec/stratisd --debug

[root@rhel8 ~]#

To interact with the daemon, a CLI is available (stratis-cli) :

[root@rhel8 ~]# stratis --help

usage: stratis [-h] [--version] [--propagate]

{pool,blockdev,filesystem,fs,daemon} ...

Stratis Storage Manager

optional arguments:

-h, --help show this help message and exit

--version show program's version number and exit

--propagate Allow exceptions to propagate

subcommands:

{pool,blockdev,filesystem,fs,daemon}

pool Perform General Pool Actions

blockdev Commands related to block devices that make up the pool

filesystem (fs) Commands related to filesystems allocated from a pool

daemon Stratis daemon information

[root@rhel8 ~]#
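Before going further, it can help to confirm that the CLI is installed and that the daemon is running. The sketch below is only an illustration: `require_cmds` is a hypothetical helper (not part of Stratis), and the package names `stratisd` and `stratis-cli` and the `stratisd` systemd unit are the RHEL 8 names as far as I know.

```shell
# require_cmds is a hypothetical helper (not part of Stratis): it returns 0
# only if every command named in its arguments is found on PATH.
require_cmds() {
    rc_missing=0
    for rc_cmd in "$@"; do
        if ! command -v "$rc_cmd" >/dev/null 2>&1; then
            echo "missing command: $rc_cmd" >&2
            rc_missing=1
        fi
    done
    return "$rc_missing"
}

if require_cmds stratis systemctl; then
    # Prints "active" when the stratisd unit is running.
    systemctl is-active stratisd || echo "stratisd is not running" >&2
else
    # Assumed RHEL 8 package and unit names.
    echo "try: yum install stratisd stratis-cli" >&2
    echo "then: systemctl enable --now stratisd" >&2
fi
```

The check is read-only, so it is safe to run on any machine, including one where Stratis is not installed.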

Among the Stratis features we can mention :

> Thin provisioning

> Filesystem snapshots

> Data integrity check

> Data caching (cache tier)

> Data redundancy (raid1, raid5, raid6 or raid10)

> Encryption

Stratis is only 2 years old and the current version is 1.0.3. Some of these features, such as redundancy, are therefore not available yet :

[root@rhel8 ~]# stratis daemon redundancy

NONE: 0

[root@rhel8 ~]#

Architecture

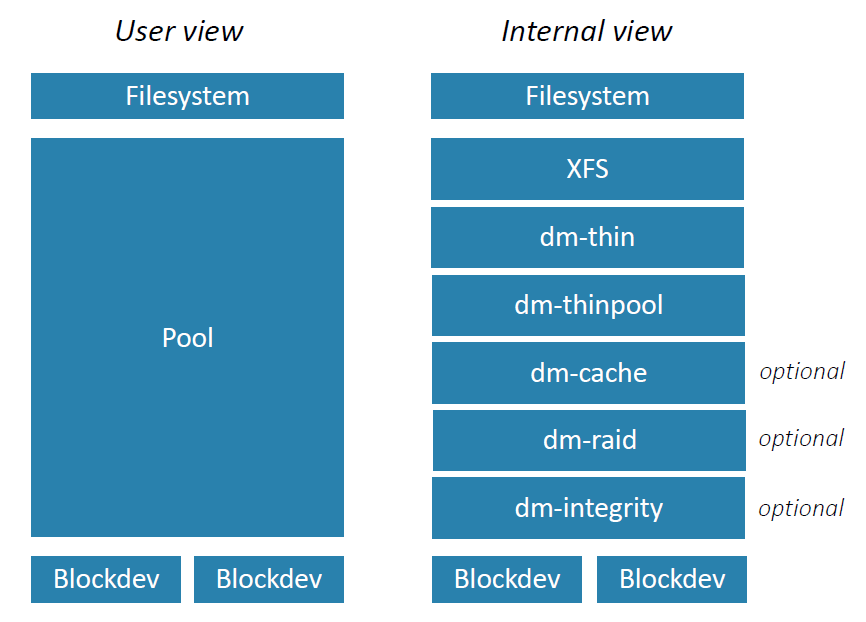

The Stratis architecture is composed of 3 layers :

Block device

A blockdev is the storage used to make up the pool. That could be :

> Hard drives / SSDs

> iSCSI

> mdraid

> Device Mapper Multipath

> …

Pool

A pool is a set of block devices.

Filesystem

Filesystems are created from the pool. Stratis supports up to 2^24 (about 16.7 million) filesystems per pool. Currently you can only create XFS filesystems on top of a pool.

Let’s try…

I have a new empty 5G disk on my system. This is the blockdev I want to use :

[root@rhel8 ~]# lsblk /dev/sdb

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sdb 8:16 0 5G 0 disk

[root@rhel8 ~]#

I create a pool composed of this single blockdev…

[root@rhel8 ~]# stratis pool create pool01 /dev/sdb

…and verify :

[root@rhel8 ~]# stratis pool list

Name Total Physical Size Total Physical Used

pool01 5 GiB 52 MiB

[root@rhel8 ~]#

On top of this pool I create an XFS filesystem called “data”…

[root@rhel8 ~]# stratis fs create pool01 data

[root@rhel8 ~]# stratis fs list

Pool Name Name Used Created Device UUID

pool01 data 546 MiB Sep 04 2019 16:50 /stratis/pool01/data dc08f87a2e5a413d843f08728060a890

[root@rhel8 ~]#

…and mount it on the /data directory :

[root@rhel8 ~]# mkdir /data

[root@rhel8 ~]# mount /stratis/pool01/data /data

[root@rhel8 ~]# df -h /data

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/stratis-1-8fccad302b854fb7936d996f6fdc298c-thin-fs-f3b16f169e8645f6ac1d121929dbb02e 1.0T 7.2G 1017G 1% /data

[root@rhel8 ~]#

Here the ‘df’ command reports the used and free sizes as seen by XFS. In fact, these are the figures of the thin device :

[root@rhel8 ~]# lsblk /dev/mapper/stratis-1-8fccad302b854fb7936d996f6fdc298c-thin-fs-f3b16f169e8645f6ac1d121929dbb02e

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

/dev/mapper/stratis-1-8fccad302b854fb7936d996f6fdc298c-thin-fs-f3b16f169e8645f6ac1d121929dbb02e 253:7 0 1T 0 stratis /data

[root@rhel8 ~]#

These figures are not very useful. Because of thin provisioning, the real storage usage is lower, and Stratis automatically grows the filesystem when it nears XFS’s currently sized capacity. The real usage is the one reported by ‘stratis pool list’.
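Since the pool figures are the ones that matter, here is a minimal sketch that turns the ‘stratis pool list’ output into a usage percentage. `pool_usage` is a hypothetical helper, and it assumes the column layout shown in this post (stratis-cli 1.0.x); other versions may print the columns differently.

```shell
# pool_usage is a hypothetical helper: it reads "stratis pool list" output on
# stdin and prints "<pool> <percent used>" for each pool. Assumed layout per
# row: name, total value, total unit, used value, used unit.
pool_usage() {
    awk '
        function to_mib(value, unit) {
            if (unit == "KiB") return value / 1024
            if (unit == "MiB") return value + 0
            if (unit == "GiB") return value * 1024
            if (unit == "TiB") return value * 1024 * 1024
            return -1   # unknown unit, row is skipped below
        }
        NR > 1 && NF >= 5 {
            total = to_mib($2, $3)
            used = to_mib($4, $5)
            if (total > 0 && used >= 0)
                printf "%s %.1f%%\n", $1, 100 * used / total
        }
    '
}

# Sample rows copied from this post, so the sketch runs without Stratis.
printf '%s\n' \
    'Name Total Physical Size Total Physical Used' \
    'pool01 5 GiB 52 MiB' | pool_usage
# prints: pool01 1.0%
```

On a real system the call would be `stratis pool list | pool_usage`.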

Let’s extend the pool with a new disk of 1G…

[root@rhel8 ~]# lsblk /dev/sdc

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sdc 8:32 0 1G 0 disk

[root@rhel8 ~]#

[root@rhel8 ~]# stratis pool add-data pool01 /dev/sdc

…and check :

[root@rhel8 ~]# stratis blockdev

Pool Name Device Node Physical Size State Tier

pool01 /dev/sdb 5 GiB In-use Data

pool01 /dev/sdc 1 GiB In-use Data

[root@rhel8 pool01]# stratis pool list

Name Total Physical Size Total Physical Used

pool01 6 GiB 602 MiB

[root@rhel8 ~]#

A nice feature of Stratis is the possibility to duplicate a filesystem with a snapshot.

For this test I create a new file on the filesystem “data” we just added :

[root@rhel8 ~]# touch /data/new_file

[root@rhel8 ~]# ls -l /data

total 0

-rw-r--r--. 1 root root 0 Sep 4 20:43 new_file

[root@rhel8 ~]#

The operation is straightforward :

[root@rhel8 ~]# stratis fs snapshot pool01 data data_snap

[root@rhel8 ~]#

Notice that Stratis does not distinguish between a filesystem and a snapshot of a filesystem. They are the same kind of “object” :

[root@rhel8 ~]# stratis fs list

Pool Name Name Used Created Device UUID

pool01 data 546 MiB Sep 04 2019 16:50 /stratis/pool01/data dc08f87a2e5a413d843f08728060a890

pool01 data_snap 546 MiB Sep 04 2019 16:57 /stratis/pool01/data_snap a2c45e9a15e74664bab5de992fa884f7

[root@rhel8 ~]#
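Because the listing gives no hint about which entry is a snapshot, a naming convention can help. The helper below is purely a suggestion: `snapshot_name` is hypothetical, and the `<fs>_snap_<timestamp>` pattern is my own convention, not something Stratis requires.

```shell
# snapshot_name FS [TIMESTAMP]
# Hypothetical helper: builds "<fs>_snap_<YYYYmmddTHHMMSS>". The timestamp
# defaults to the current local time when it is not given.
snapshot_name() {
    sn_fs=$1
    sn_ts=${2:-$(date +%Y%m%dT%H%M%S)}
    printf '%s_snap_%s\n' "$sn_fs" "$sn_ts"
}

snapshot_name data 20190904T165700
# prints: data_snap_20190904T165700
```

The result can then be passed as the last argument of `stratis fs snapshot`, for example `stratis fs snapshot pool01 data "$(snapshot_name data)"`.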

I can now mount the new filesystem…

[root@rhel8 ~]# mkdir /data_snap

[root@rhel8 ~]# mount /stratis/pool01/data_snap /data_snap

[root@rhel8 ~]# df -h /data_snap

Filesystem Size Used Avail Use% Mounted on

/dev/mapper/stratis-1-8fccad302b854fb7936d996f6fdc298c-thin-fs-a2c45e9a15e74664bab5de992fa884f7 1.0T 7.2G 1017G 1% /data_snap

[root@rhel8 ~]#

…and check that my test file is here :

[root@rhel8 ~]# ls -l /data_snap

total 0

-rw-r--r--. 1 root root 0 Sep 4 20:43 new_file

[root@rhel8 ~]#

Nice ! But… can I snapshot a filesystem “online”, meaning while data is being written to it ?

Let’s create another snapshot from one session while a second session is writing to the /data filesystem.

From session 1 :

[root@rhel8 ~]# stratis fs snapshot pool01 data data_snap2

And from session 2, at the same time :

[root@rhel8 ~]# dd if=/dev/zero of=/data/bigfile.txt bs=4k iflag=fullblock,count_bytes count=4G

Once done, the new filesystem is present…

[root@rhel8 ~]# stratis fs list

Pool Name Name Used Created Device UUID

pool01 data_snap2 5.11 GiB Sep 27 2019 11:19 /stratis/pool01/data_snap2 82b649724a0b45a78ef7092762378ad8

…and I can mount it :

[root@rhel8 ~]# mkdir /data_snap2

[root@rhel8 ~]# mount /stratis/pool01/data_snap2 /data_snap2

[root@rhel8 ~]#

But the file inside differs from the original :

[root@rhel8 ~]# md5sum /data/bigfile.txt /data_snap2/bigfile.txt

c9a5a6878d97b48cc965c1e41859f034 /data/bigfile.txt

cde91bbaa4b3355bc04f611405ae4430 /data_snap2/bigfile.txt

[root@rhel8 ~]#

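So the answer is no, at least not if you expect the copy to match the final state of the data. A snapshot is a point-in-time image, so a snapshot taken while dd was still running most likely holds only the part of bigfile.txt written up to that moment, which would explain the different checksum. I would therefore strongly recommend unmounting the filesystem (or at least stopping all writes) before creating a snapshot.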
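The unmount, snapshot, remount sequence can be sketched as follows. This is a cautious illustration rather than a tested procedure: `run` and `offline_snapshot` are hypothetical helpers, the device path follows the /stratis/POOL/FS layout seen in this post, and by default the script only prints the commands (set DRY_RUN=0 to really execute them).

```shell
# run: print the command in dry-run mode (the default), execute it otherwise.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# offline_snapshot POOL FS SNAPSHOT MOUNTPOINT
# Unmounts the filesystem, snapshots it, then remounts it even if the
# snapshot step failed. Returns the exit status of the snapshot step.
offline_snapshot() {
    os_pool=$1; os_fs=$2; os_snap=$3; os_mnt=$4
    run umount "$os_mnt" || return 1
    os_rc=0
    run stratis fs snapshot "$os_pool" "$os_fs" "$os_snap" || os_rc=$?
    run mount "/stratis/$os_pool/$os_fs" "$os_mnt" || return 1
    return "$os_rc"
}

offline_snapshot pool01 data data_snap3 /data
```

Remounting regardless of the snapshot result keeps /data available if the snapshot step fails; whether that is the right trade-off depends on the application using the filesystem.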

Conclusion

Stratis is an easy-to-use tool for managing local storage on a RHEL 8 server. But due to its immaturity I would not recommend using it in a production environment yet. Moreover, some interesting features like RAID management or data integrity checks are not available for the moment, but I’m quite sure that the tool will evolve quickly !

If you want to know more, all is here.

Enjoy testing Stratis and stay tuned to discover its evolution…
