In this blog post I would like to talk about two new features shipped with SQL Server 2016 for AlwaysOn availability groups. The first is the new direct seeding capability and the second is distributed availability groups (DAG). I will cover the second one in a future blog post.

First of all, let’s talk about direct seeding. At first glance, this feature looks very interesting because it greatly simplifies the process of adding a highly available database. With previous versions, making an application database highly available by adding it to an availability group required extra steps from the database administrator, such as a backup / restore or initializing the replication process. Let’s say a software vendor wants to install or add a database (SharePoint for instance).

Enabling automatic seeding for the replicas concerned reduces the work of adding databases by automating more of the initialization process. We just have to add the database to the availability group and that’s it: no extra backup / restore tasks, no file share to configure for backup / restore and no manual initialization. To support this, the CREATE and ALTER AVAILABILITY GROUP syntax includes a new per-replica parameter named SEEDING_MODE that has two settings: MANUAL and AUTOMATIC. The former means that we use the legacy method to initialize databases on the secondaries (backup / restore) and the latter uses the new automated initialization method, which seeds the database data across the replication network.
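As a minimal sketch of the ALTER side of this syntax, the statement below switches the seeding mode of one replica of an existing availability group. The group and replica names are placeholders, and the statement is assumed to run on the primary replica.

```sql
-- Run on the primary replica.
-- [testGrp] and the replica name are placeholders for your own environment.
ALTER AVAILABILITY GROUP [testGrp]
    MODIFY REPLICA ON N'WIN20122SQL16\SQL16'
    WITH (SEEDING_MODE = AUTOMATIC);
GO

-- To go back to the legacy backup / restore initialization:
-- ALTER AVAILABILITY GROUP [testGrp]
--     MODIFY REPLICA ON N'WIN20122SQL16\SQL16'
--     WITH (SEEDING_MODE = MANUAL);
```

Because the setting is per replica, the same statement would have to be repeated for each secondary that should be seeded automatically.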

Let’s have a quick demo of this new feature. I will configure a basic availability group with two replicas (WIN20121SQL16\SQL16 and WIN20122SQL16\SQL16). Notice the new parameter SEEDING_MODE = AUTOMATIC, which means that we will use the new automated method to initialize databases for this availability group. Notice also that we no longer need to create a “dummy” database before creating an availability group.

On the primary:

-- primary replica
CREATE AVAILABILITY GROUP [testGrp]
FOR
REPLICA ON
    N'WIN20121SQL16\SQL16'
    WITH (ENDPOINT_URL = N'TCP://WIN20121SQL16.dbi-services.test:5022',
          FAILOVER_MODE = AUTOMATIC,
          AVAILABILITY_MODE = SYNCHRONOUS_COMMIT,
          BACKUP_PRIORITY = 50,
          SECONDARY_ROLE(ALLOW_CONNECTIONS = NO),
          SEEDING_MODE = AUTOMATIC),
    N'WIN20122SQL16\SQL16'
    WITH (ENDPOINT_URL = N'TCP://WIN20122SQL16.dbi-services.test:5022',
          FAILOVER_MODE = AUTOMATIC,
          AVAILABILITY_MODE = SYNCHRONOUS_COMMIT,
          BACKUP_PRIORITY = 50,
          SECONDARY_ROLE(ALLOW_CONNECTIONS = NO),
          SEEDING_MODE = AUTOMATIC);
GO

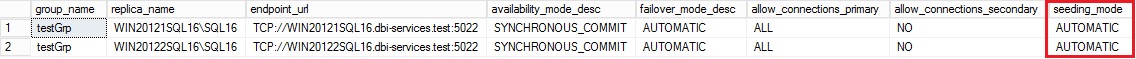

System views and DMVs have been updated accordingly. For example, sys.availability_replicas exposes the seeding mode through the new seeding_mode and seeding_mode_desc columns.

select
    ag.name as group_name,
    ar.replica_server_name as replica_name,
    ar.endpoint_url,
    ar.availability_mode_desc,
    ar.failover_mode_desc,
    ar.primary_role_allow_connections_desc as allow_connections_primary,
    ar.secondary_role_allow_connections_desc as allow_connections_secondary,
    ar.seeding_mode_desc as seeding_mode
from sys.availability_replicas as ar
join sys.availability_groups as ag
    on ar.group_id = ag.group_id;
go

Let’s complete the configuration by joining the secondary to the availability group and granting the availability group itself the extra permission to create databases on the secondaries.

-- secondary replica
ALTER AVAILABILITY GROUP [testGrp] JOIN;
ALTER AVAILABILITY GROUP [testGrp] GRANT CREATE ANY DATABASE;
GO

At this point we just have to add the new WideWorldImporters database to the testGrp availability group and our job is over!

-- primary replica
-- add database WideWorldImporters to testGrp
ALTER AVAILABILITY GROUP [testGrp] ADD DATABASE [WideWorldImporters];
GO

The system will then seed the database data to the secondary. We can follow the seeding progress through two new DMVs: sys.dm_hadr_automatic_seeding and sys.dm_hadr_physical_seeding_stats.

The sys.dm_hadr_automatic_seeding DMV reports successful or failed database seedings and the corresponding error messages. The sys.dm_hadr_physical_seeding_stats DMV describes the seeding operations currently running, including the estimated completion time and statistics about I/O and network usage.

Here is what I found after adding the database to the availability group:

select
    ag.name as aag_name,
    ar.replica_server_name,
    d.name as database_name,
    has.current_state,
    has.failure_state_desc as failure_state,
    has.error_code,
    has.performed_seeding,
    has.start_time,
    has.completion_time,
    has.number_of_attempts
from sys.dm_hadr_automatic_seeding as has
join sys.availability_groups as ag
    on ag.group_id = has.ag_id
join sys.availability_replicas as ar
    on ar.replica_id = has.ag_remote_replica_id
join sys.databases as d
    on d.group_database_id = has.ag_db_id


select
    local_physical_seeding_id,
    remote_physical_seeding_id,
    local_database_name,
    @@servername as local_machine_name,
    remote_machine_name,
    role_desc as [role],
    transfer_rate_bytes_per_second,
    transferred_size_bytes / 1024 as transferred_size_KB,
    database_size_bytes / 1024 as database_size_KB,
    DATEADD(mi, DATEDIFF(mi, GETUTCDATE(), GETDATE()), start_time_utc) as start_time,
    DATEADD(mi, DATEDIFF(mi, GETUTCDATE(), GETDATE()), estimate_time_complete_utc) as estimate_time_complete,
    total_disk_io_wait_time_ms,
    total_network_wait_time_ms,
    is_compression_enabled
from sys.dm_hadr_physical_seeding_stats


The WideWorldImporters database is not very big by default (roughly 455MB). The estimated duration to seed the database is approximately 1’14’’.

Let’s compare this with a quick calculation based on the transfer rate, estimated at ~42MB/s (transfer_rate_bytes_per_second column value), and the total size of the data to transfer. We get 455 / 42 ≈ 11 seconds to transfer all the data.
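The same back-of-the-envelope estimate can be computed directly from the DMV while a seeding operation is running. This is only a sketch: it assumes the transfer rate stays constant for the rest of the operation, and it relies only on columns already used in the query against sys.dm_hadr_physical_seeding_stats above.

```sql
-- Rough remaining-time estimate for each running seeding operation.
-- Assumes a constant transfer rate, which is only an approximation.
SELECT
    local_database_name,
    database_size_bytes / 1048576.0            AS database_size_MB,
    transfer_rate_bytes_per_second / 1048576.0 AS transfer_rate_MB_per_s,
    -- NULLIF avoids a divide-by-zero error when no rate has been measured yet
    (database_size_bytes - transferred_size_bytes) * 1.0
        / NULLIF(transfer_rate_bytes_per_second, 0) AS estimated_seconds_remaining
FROM sys.dm_hadr_physical_seeding_stats;
```

The DMV only returns rows while seeding is in progress, so on a small database the query has to be run quickly after adding the database to the group.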

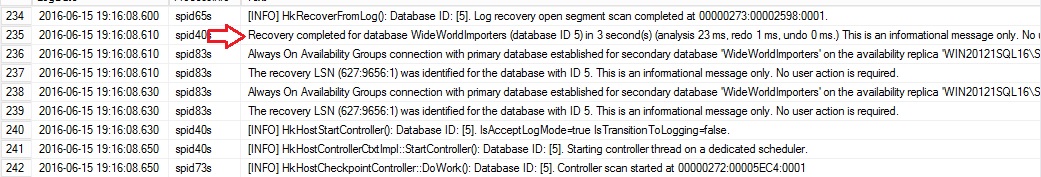

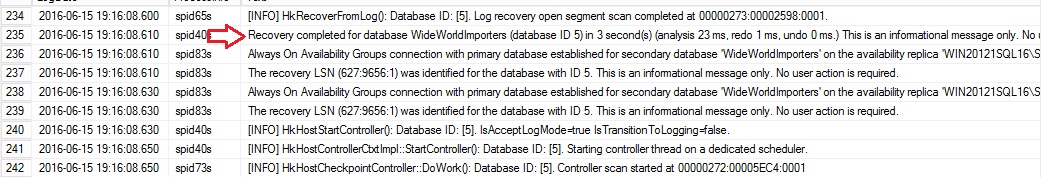

Let’s now have a look at the SQL Server error log on the secondary. It contains information related to the seeding operation.

…

The seeding operation took place between 19:15:53 and 19:16:08, so approximately 15 seconds, which is not far from our previous calculation. The estimated duration based on the start and estimated completion times seems less accurate than the one derived from the transfer throughput, but I need to perform further tests and investigations before coming to any conclusion.

In short, a pretty cool feature, but my guess is that direct seeding may not be suitable for all scenarios. One customer example that comes to mind is big databases that run inside availability groups. I had the opportunity to migrate SAP databases (~1TB) to SQL Server 2014 and availability groups: direct seeding would not be used in this case. Indeed, direct seeding does not use compression by default, and we can easily imagine that seeding all the data across the network may take a long time. We can change this default behaviour by enabling trace flag 9567, but at the cost of high CPU consumption. That is probably not a good idea if we plan to use the application immediately after adding the SAP database to the availability group. In addition, log stream compression may hurt performance with a heavy OLTP workload because it introduces latency by design. There is a tradeoff to find here. For such databases, the backup / restore process therefore seems to be the best option, combined with optimization techniques like compression, increasing the number of backup media files or changing the MAXTRANSFERSIZE and BUFFERCOUNT parameter values.
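For reference, the manual backup / restore path could look like the following sketch. The database name [BigSapDb], the \\fileshare\backup share, the number of stripes and the tuning values are all hypothetical and would need to be tested against your own hardware. It also assumes the secondary replica is configured with SEEDING_MODE = MANUAL.

```sql
-- On the primary: add the database to the group, then take a compressed,
-- striped full backup followed by a log backup.
-- All names, paths and tuning values below are illustrative only.
ALTER AVAILABILITY GROUP [testGrp] ADD DATABASE [BigSapDb];
GO
BACKUP DATABASE [BigSapDb]
TO  DISK = N'\\fileshare\backup\BigSapDb_1.bak',
    DISK = N'\\fileshare\backup\BigSapDb_2.bak',
    DISK = N'\\fileshare\backup\BigSapDb_3.bak',
    DISK = N'\\fileshare\backup\BigSapDb_4.bak'
WITH COMPRESSION,
     MAXTRANSFERSIZE = 4194304,  -- 4 MB, the largest allowed value
     BUFFERCOUNT = 64,           -- hypothetical value, tune per environment
     STATS = 10;
GO
BACKUP LOG [BigSapDb]
TO DISK = N'\\fileshare\backup\BigSapDb.trn'
WITH COMPRESSION;
GO

-- On the secondary: restore without recovery, then join the database.
RESTORE DATABASE [BigSapDb]
FROM DISK = N'\\fileshare\backup\BigSapDb_1.bak',
     DISK = N'\\fileshare\backup\BigSapDb_2.bak',
     DISK = N'\\fileshare\backup\BigSapDb_3.bak',
     DISK = N'\\fileshare\backup\BigSapDb_4.bak'
WITH NORECOVERY, STATS = 10;
GO
RESTORE LOG [BigSapDb]
FROM DISK = N'\\fileshare\backup\BigSapDb.trn'
WITH NORECOVERY;
GO
ALTER DATABASE [BigSapDb] SET HADR AVAILABILITY GROUP = [testGrp];
GO
```

Striping the backup over several files lets SQL Server write to them in parallel, which is usually where most of the gain comes from before touching MAXTRANSFERSIZE and BUFFERCOUNT.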

Another scenario that comes to mind is the migration of thousands of databases at the same time. Which is the fastest way to migrate all these databases? Adding them to the availability group and letting the system seed the database data across the network to each secondary, or copying the database files to each secondary and choosing to skip the initial data synchronization? At this point I need to investigate further to answer.
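To experiment with the first option, one way to prepare the work is to generate the ADD DATABASE statements rather than typing them by hand. The query below is only a sketch under several assumptions: the group is named [testGrp], every candidate database uses the full recovery model and already has a full backup (a prerequisite for joining an availability group), and filtering on database_id > 4 is enough to exclude system databases in your environment.

```sql
-- Run on the primary replica.
-- Generates (does not execute) one ADD DATABASE statement per candidate database.
SELECT N'ALTER AVAILABILITY GROUP [testGrp] ADD DATABASE '
       + QUOTENAME(d.name) + N';' AS add_statement
FROM sys.databases AS d
WHERE d.database_id > 4                 -- skips master, tempdb, model and msdb only
  AND d.state_desc = N'ONLINE'
  AND d.recovery_model_desc = N'FULL'   -- availability groups require the full recovery model
  AND d.group_database_id IS NULL       -- not already part of an availability group
ORDER BY d.name;
```

Reviewing the generated statements before running them, and adding the databases in batches, makes it easier to watch the seeding DMVs and keep the load on the network under control.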

Another important point is that direct seeding is not part of the availability group wizard GUI. You have probably noticed that I didn’t use the wizard in this blog post, and that was intentional. Brent Ozar has already created a Connect item about this. Please feel free to vote!

See you!

By David Barbarin
