I spent some times to write blog posts about SQL Server on Docker since few months and you likely noticed it concerned mainly SQL Server on Linux. But what about SQL Server on Docker and Windows? If you take a look at the Docker images provided by Microsoft, only 2017 version is available and it is only intended for development and testing use. Versions prior 2017 seem to not be supported so far (but I don’t have in mind the Microsoft plan on this topic) and if you want to use containers with older SQL Server versions you have to start from scratch by yourself. This is not a hard task but I have in mind a discussion with one of my customers about the maintenance of SQL Server images prior 2017 and he told me he didn’t allocate resources to create and maintain such images by himself.

In addition, I recently presented at different events including our dbi services internal event for customers the internal project we are managing about our DMK maintenance module tool for SQL Server. To cut the story short, this tool aims to provide smart maintenance capabilities for customer databases including backups, index, statistics etc …

Over the time, the code grew up and we had to support different versions of SQL Server from 2008 to SQL Server 2017. In the same time, the number of scenarios we have to manage and to test regarding the new features shipped with service packs and cumulative updates as well increased drastically over the time. So we decided to move on docker containers from different obvious reasons:

- Containers are easy to provision for unit testing

- Lower footprint on our local machine

- We can share easily SQL images between members of our “development” team in a private registry

- We will able to integer containers in a potential “future” CI pipeline

In our context, each developer has a local docker engine installed on a Windows 10 Pro laptop but for medium/large companies, internal rules may prevent installing such product on each individual developer laptop. Therefore, they prefer likely to provide a dedicated environment for developers that meet the internal rules and to keep the focus on flexibility.

In such shared infrastructure a main concern will be probably disk space issues because of the potential testing database(s) size and the number of containers provisioned at the same time. Let’s say that several developers would like to provision one SQL Server instance each with a testing database attached on it and that comes from the production through a sanitized database pipeline. The total size of this database is enough big to warn about a potential disk space issue because each instanced container will include its own image size (roughly 10GB with SQL Server containers on Windows) + the size of the testing database – let’s say more than 50 GBs and likely more for usual cases.

If you’re in one of the aforementioned scenarios, Windocks may be a good alternative scenario.

During this month I had the opportunity to test the product in the context of our internal project and after some experimentation stuff I admit I was agreeably surprised by some built-in capabilities as:

- Windows authentication mode supported

- SQL Server database cloning capabilities

- The ability to use both Docker CLI and Web UI (even if I’m in favor of Docker CLI)

- The ability to refresh a cloned database image from a differential backup

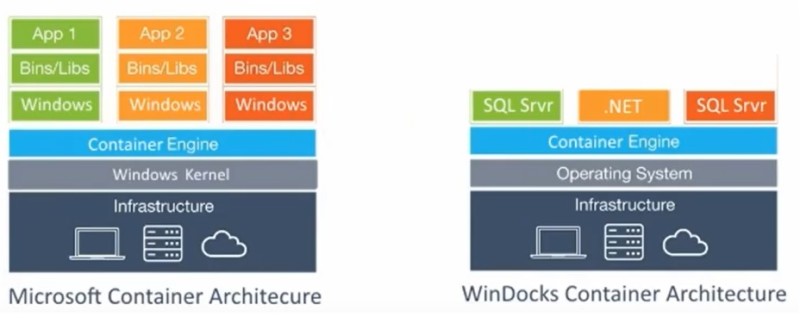

Before to dig further into the above features let’s just take few seconds to see the Windocks architecture design

We may notice some differences here. With a traditional Docker architecture, containers run on shared operating system kernel (either Windows or Linux) whereas Windocks is a strictly application construct-oriented solution and requires installing a SQL Server instance on the host as base instance for SQL Server containers. This is a fundamental change that provides some interesting advantages:

- We don’t have to rebuild the containers to get OS & Framework patches through base image updates because containers rely only on the SQL Server base instance

- We don’t break compatibility with some Windows storage functionalities as VSS and SQL Writer as well if you rely on them with third-party backup tools

- We benefit from the underlying security and configuration of the SQL Server based instance meaning we may use Windows authentication for instance

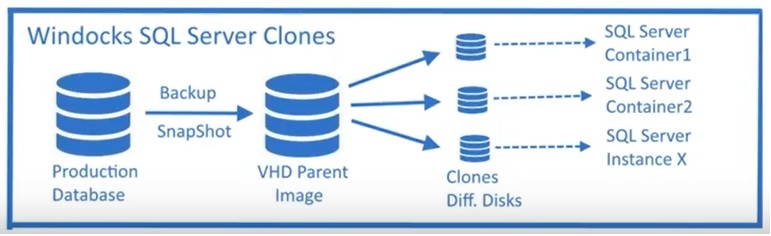

Let’s talk now about one interesting feature shipped with Windocks that is cloned databases. Here a picture (from Windocks) that shows a high-level workflow of using SQL Server containers with cloned databases.

The starting point is a full database backup or a snapshot and Windocks will generate the corresponding VHD parent image of the backup media. Then each SQL Server container generated will use a writable clone of this parent image reducing drastically the disk footprint of each container (at least when the SQL Server container is generated). This is a common workflow that customers may implement for unit testing or for CI/CD pipeline to refresh development environments. The production database may be big in size and in this context the interest of using cloned databases becomes obvious. Another way provided would be to rely on mounted snapshot-based volumes from the storage provider but at this stage I didn’t test it. Maybe a next time!

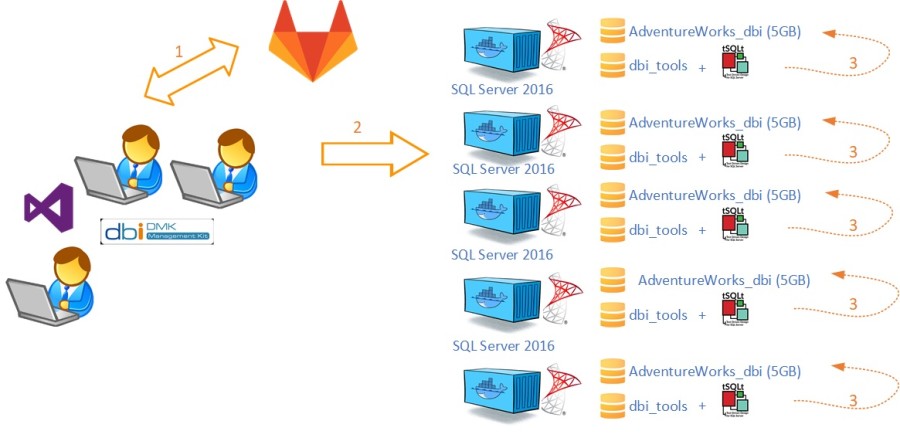

To give a better picture of what it is possible to do with cloned databases, let me show you one way to implement it in the context of our DMK maintenance tool project. The development workflow of the development project is as follows:

We use SSDT and GitLab to manage our DMK maintenance tool sources and we perform unit testing by provisioning one or several SQL Server containers regarding the target version we want to validate at this moment. Furthermore we developed testing scenarios based on tSQLt framework we run after provisioning the SQL Server containers. With such architecture, we initially have to maintain images of different SQL Server versions and each time we want to create a container we have to attach one copy of the customized AdventureWorks_dbi database. Let’s say we want to work with 4 containers (SQL Server 2016) at time. We must to ensure we have sufficient disk space for 4 copies of this database (5 x 4 = 20GB) + space required for each container (10GB x 4 = 40GB) = 60GB. The dbi_tools database is intended to stay very small (512MB up to 1GB) comparing to other testing components, this is way I didn’t include it to the math.

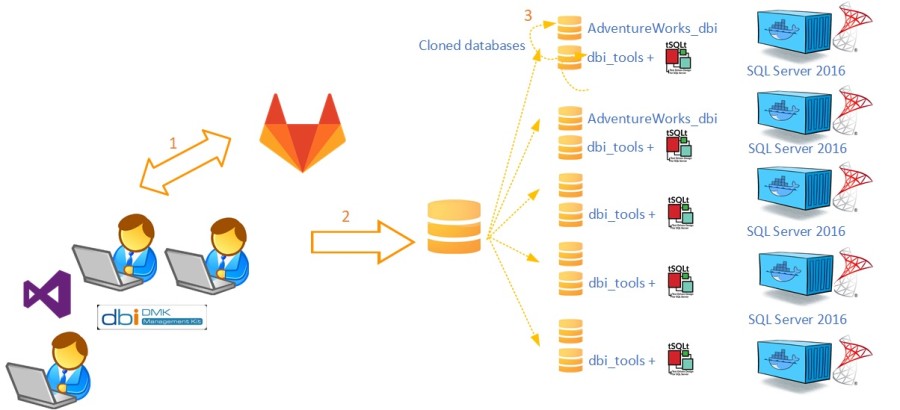

Let’s now apply Windocks with the above context and the new architecture becomes as follows:

In this second scenario, we may include both the AdventureWorks_dbi and dbi_tools databases (including tSQLt framework objects) in a VHD parent image. For the demo, I used a SQL Server 2016 instance installed on the host that will be controlled by Windocks service during the container generation.

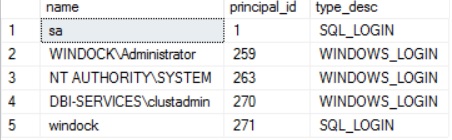

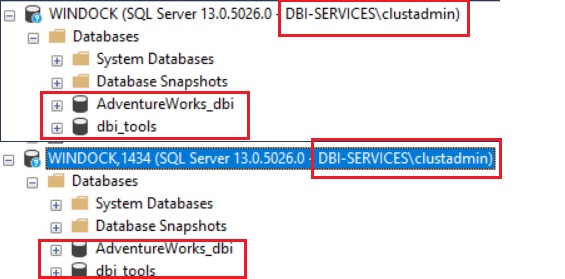

As I said previously we may rely on the configuration and the security of the base instance. Thus, I configured my security based on both Windows Authentication (dbi-services\clustadmin domain account) for SQL Server sysadmins and SQL authentication for development purpose (windock user).

In terms of configuration, tSQLt framework requires enabling CLR on the server level, so I changed the configuration directly on the SQL based instance to allow all my containers to inherit this configuration change.

SELECT

[name],

principal_id,

[type_desc]

FROM

sys.server_principals

WHERE

[type] IN ('U', 'S')

AND [name] NOT LIKE '##MS_%##'

AND [name] NOT LIKE 'NT SERVICE%'

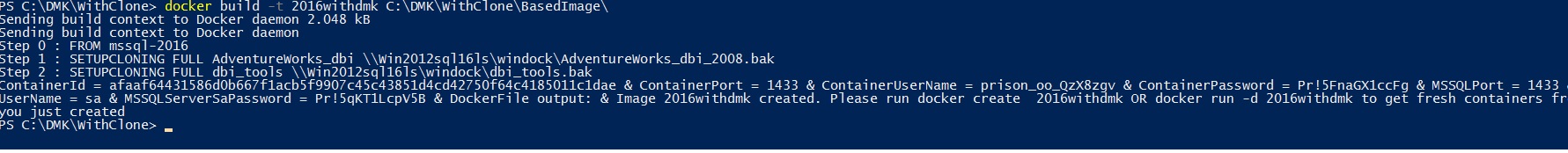

Here the content of my docker file.

FROM mssql-2016 SETUPCLONING FULL AdventureWorks_dbi \\Win2012sql16ls\windock\AdventureWorks_dbi_2008.bak SETUPCLONING FULL dbi_tools \\Win2012sql16ls\windock\dbi_tools.bak

You may notice some new commands here:

- FROM mssql-2016 indicates we will use the SQL Server 2016 instance as base image.

- SETUPCLONING FULL indicates to generate the VHD parent image that we will include both the AdventureWorks_dbi and dbi_tools databases in a remote network path

Go ahead and let’s generate the corresponding SQL Server docker cloned image with the special -t flag

docker build -t 2016withdmk C:\DMK\WithClone\BasedImage\

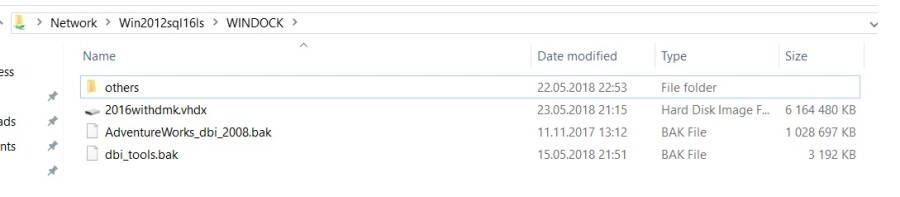

The process may take some times to generate the VHD parent image depending on the different database sizes and the location (local fast disk, network share bandwidth etc …)

As expected, because the VHD parent image is a full byte copy of the data, the size is basically the sum of both AdventureWorks_dbi and dbi_tools database sizes.

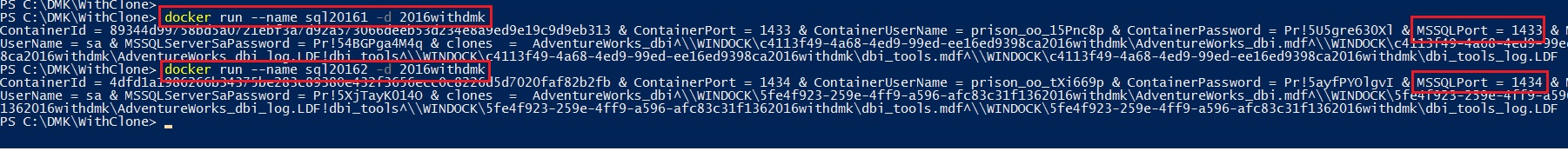

Compared to a traditional approach, the provisioning of the associated containers will be faster irrespective to database size. Let’s create 2 containers from the image generated previously with the following docker commands:

PS C:\DMK\WithClone> docker run --name sql20161 -d 2016withdmk PS C:\DMK\WithClone> docker run --name sql20162 -d 2016withdmk

Note that by default, Windocks will choose a random port between a configured range in the node.conf (START_PORT/ PORTS_PER_USER) unless you override the default behavior using the -p parameter.

Let’s get a picture of the existing containers. My 2 containers have been generated correctly from the 2016withdmk base image.

PS C:\DMK\WithClone> docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS 89344d99758b 2016withdmk "" 5 minutes ago Started 1433/ 4dfd1a198626 2016withdmk "" 4 minutes ago Started 1434/

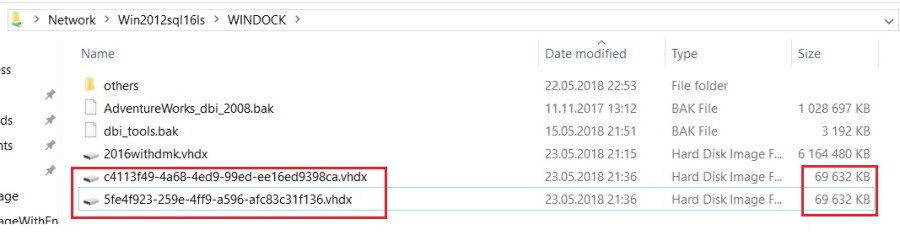

Let’s take another look at the storage side:

The interesting point here is the two differential disks created during the creation of my 2 previous containers are very small size (~70MB per container).

Let’s try to connect from SSMS to the new fresh containers:

It works and did you notice I was connected with my domain account? 🙂

Last topic I wanted to share with you is the Windocks capability to update a base image from differential backups. In a real context, you may need to refresh frequently your environment with recent data for developers and regarding your full backup size it might be advantageous to deal with differential backups.

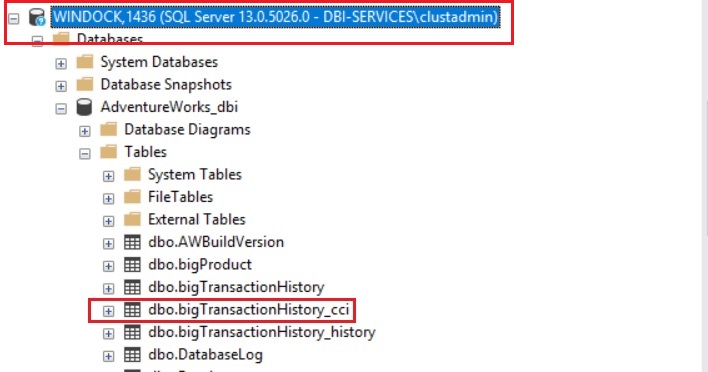

Let’s consider this process with my internal project environment. During the development process we already had to scale the database schema of our testing database with the features shipped with new versions, service packs or cumulative update over the time. Let’s say we have to add a new dbo.bigTransactionhistory_cci table to test columnstore index scenarios. We first add the concerned table. Then we will perform a differential backup and finally we will update the 2016withDMK base image with it. Obviously in the context of my demo, the database size is likely not big enough to take full advantage of this feature but I trust you to draw a comparison with a more realistic scenario.

The image I want to update is named 2016withdmk. Note the mssql-xxx images that are in fact images from SQL Server base instances installed on my host server.

PS C:\DMK\WithClone> docker images REPOSITORY TAG IMAGE ID CREATED VIRTUAL SIZE 2016withdmk none b3a249ba-2cf Less than a second ago 0 B agitated_heisenberg none bbd0ce26-4bb Less than a second ago 0 B dotnet-4.5 none dotnet-4.5 3 years ago 0 B windows none windows 3 years ago 0 B mssql-2008r2 none mssql-2008r2 3 years ago 0 B mssql-2016 none mssql-2016 3 years ago 0 B mssql-2014 none mssql-2014 3 years ago 0 B

My new docker file content to update the 2016withdmk base image is as follows.

FROM 2016withdmk SETUPCLONING DIFF AdventureWorks_dbi \\Win2012sql16ls\windock\AdventureWorks_dbi_2008.DIFF SETUPCLONING DIFF dbi_tools \\Win2012sql16ls\windock\dbi_tools.DIFF

I used the differential backup of the AdventureWorks_dbi database with the SETUPCLONNING DIFF command.

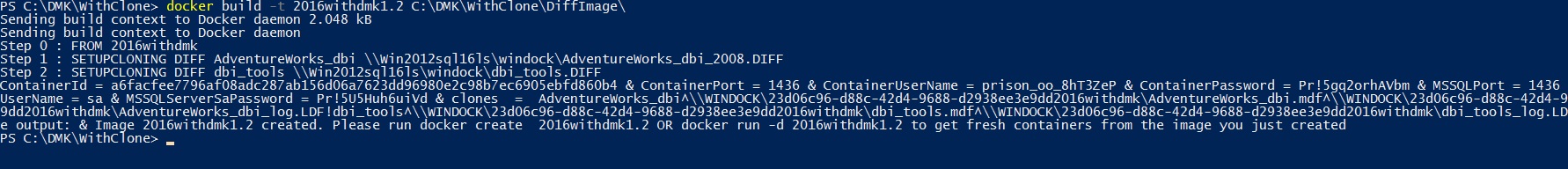

Let’s start updating the 2016withDMK base image with the following docker command (I tagged my new image with 1.2 suffix):

docker build -t 2016withdmk1.2 C:\DMK\WithClone\DiffImage\

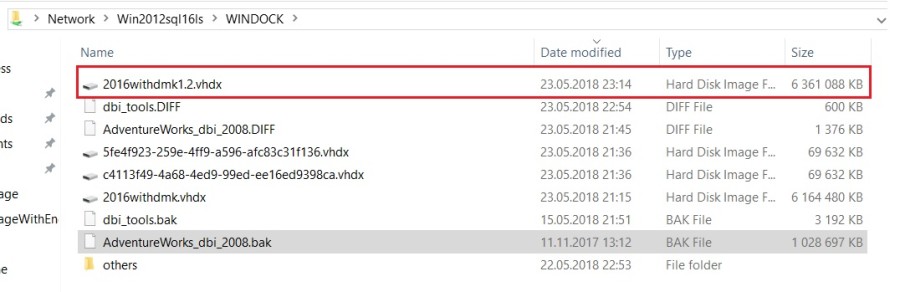

Although using a differential backup may allow for substantial productivity keep in mind that updating a VHD parent image will require creating an additional VHD parent image that will become another full byte copy of the environment as shown below:

After running the same docker run command exposed before, let’s get a picture of the running on my server. The container id 789ce49562d0 is the new fresh container with updated data (2016withdmk1.2 image).

PS C:\DMK\WithClone> docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS 89344d99758b 2016withdmk "" About an hour ago Started 1433/ 4dfd1a198626 2016withdmk "" About an hour ago Started 1434/ 789ce49562d0 2016withdmk1.2 "" 2 minutes ago Started 1436/

I may retrieve the new dbo.bigTransactionHistory_cci after connecting to the concerned SQL Server instance. I’m now able to update and to execute tSQLt scenarios to test columnstore index maintenance scenarios!

In this blog post we’ve just surfaced some capabilities and possibilities provided by Windocks. There are other interesting features as encrypted password in the docker file (as we may use with Docker Swarm for instance), the support of TDE databases or lastly the ability to manage SQL Server on Linux. I will probably blog about it in the near future. Stay tuned!

By David Barbarin

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/12/microsoft-square.png)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/DWE_web-min-scaled.jpg)